Questioning why a few of your pages don’t present up in Google?

Crawlability issues may very well be the wrongdoer.

On this information, we’ll cowl what crawlability issues are, how they have an effect on search engine marketing, and easy methods to repair them.

Let’s get began.

What Are Crawlability Issues?

Crawlability issues are points that stop serps from accessing your web site pages.

When serps akin to Google crawl your website, they use automated bots to learn and analyze your pages.

If there are crawlability issues, these bots could encounter obstacles that hinder their skill to correctly entry your pages.

Widespread crawlability issues embrace:

- Nofollow hyperlinks

- Redirect loops

- Unhealthy website construction

- Gradual website velocity

How Do Crawlability Points Have an effect on search engine marketing?

Crawlability issues can drastically have an effect on your search engine marketing recreation.

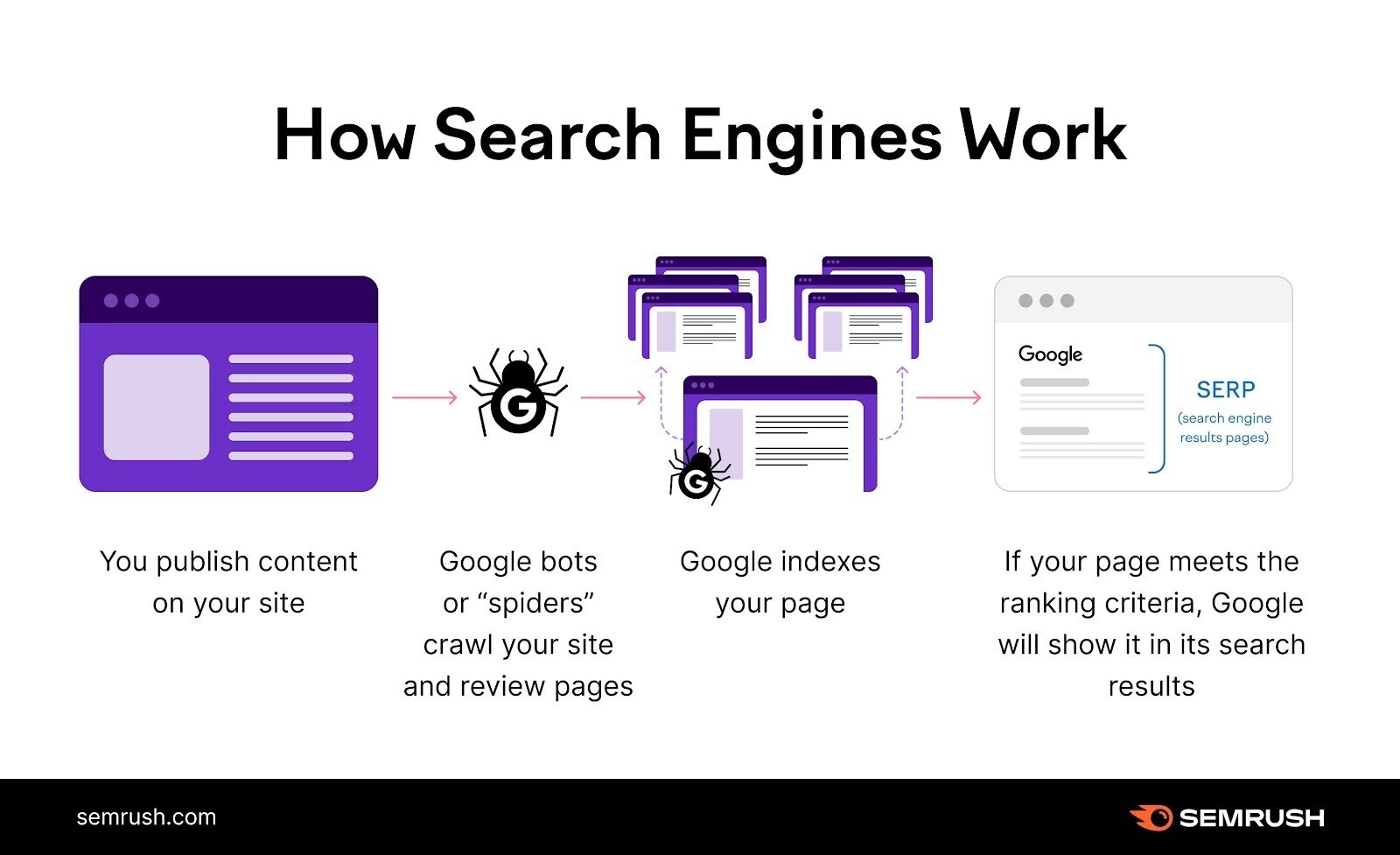

Engines like google act like explorers once they crawl your web site, looking for as a lot content material as attainable.

But when your website has crawlability issues, some (or all) pages are virtually invisible to serps.

They’ll’t discover them. Which implies they’ll’t index them—i.e., save them to show in search outcomes.

This implies lack of potential search engine (natural) site visitors and conversions.

Your pages have to be each crawable and indexable with a view to rank in serps.

11 Crawlability Issues & Methods to Repair Them

1. Pages Blocked In Robots.txt

Engines like google first take a look at your robots.txt file. This tells them which pages they’ll and can’t crawl.

In case your robots.txt file seems like this, it means your whole web site is blocked from crawling:

Person-agent: *

Disallow: /

Fixing this drawback is straightforward. Exchange the “disallow” directive with “permit.” Which ought to permit serps to entry your whole web site.

Person-agent: *

Permit: /

In different circumstances, solely sure pages or sections are blocked. For example:

Person-agent: *

Disallow: /merchandise/

Right here, all of the pages within the “merchandise” subfolder are blocked from crawling.

Remedy this drawback by eradicating the subfolder or web page specified. Engines like google ignore the empty “disallow” directive.

Person-agent: *

Disallow:

Alternatively, you may use the “permit” directive as an alternative of “disallow” to instruct serps to crawl your whole website. Like this:

Person-agent: *

Permit: /

Word: It’s widespread apply to dam sure pages in your robots.txt that you simply don’t wish to rank in serps, akin to admin and “thanks” pages. It’s a crawlability drawback solely whenever you block pages meant to be seen in search outcomes.

2. Nofollow Hyperlinks

The nofollow tag tells serps to not crawl the hyperlinks on a webpage.

The tag seems like this:

<meta title="robots" content material="nofollow">

If this tag is current in your pages, the hyperlinks inside could not usually get crawled.

This creates crawlability issues in your website.

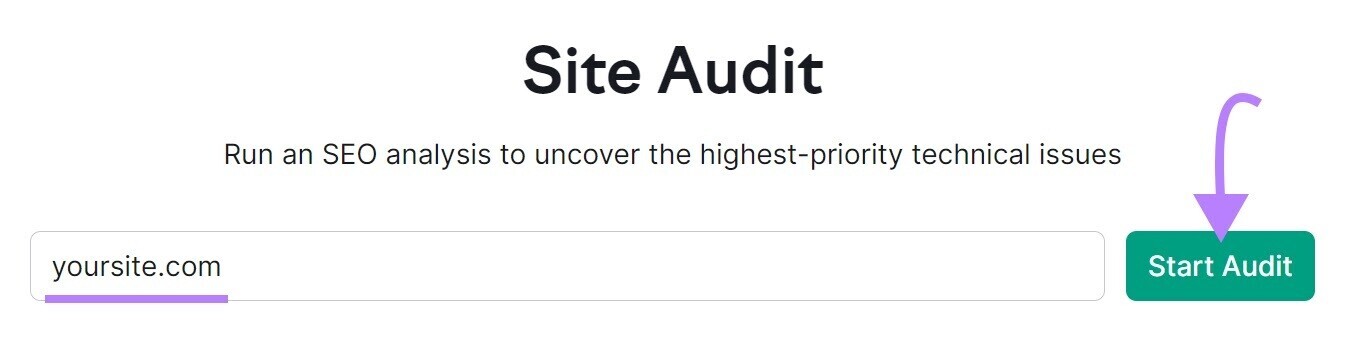

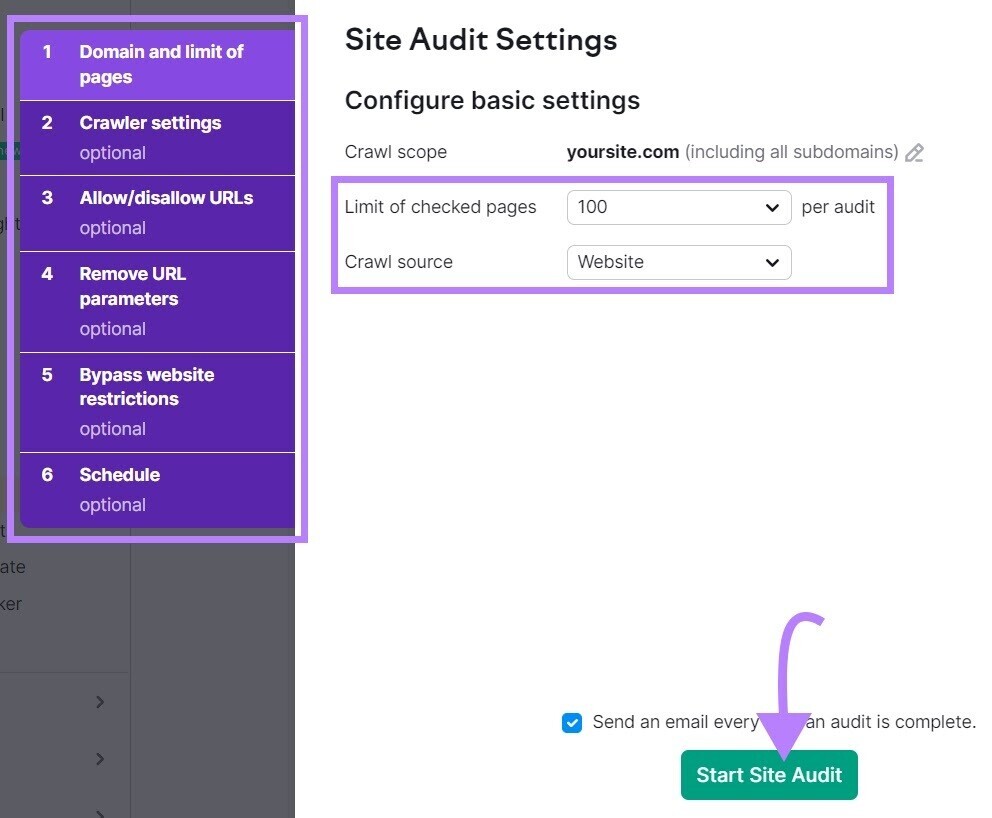

Scan your web site with Semrush’s Web site Audit device to verify for nofollow hyperlinks.

Open the device, enter your web site, and click on “Begin Audit.”

The “Web site Audit Settings” window will seem.

From right here, configure the fundamental settings and click on “Begin Web site Audit.”

As soon as the audit is full, navigate to the “Points” tab and seek for “nofollow.”

To see whether or not there are nofollow hyperlinks detected in your website.

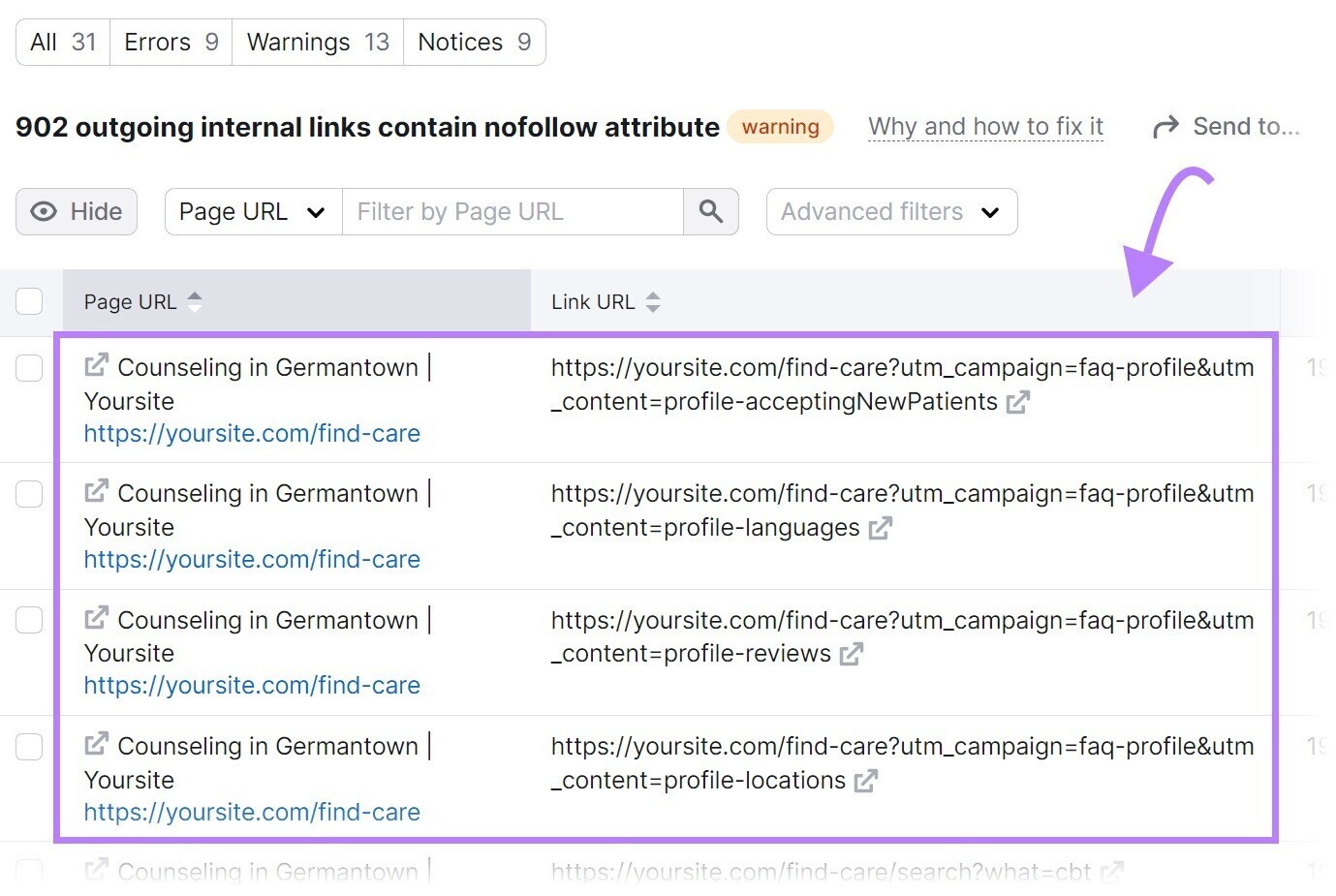

If nofollow hyperlinks are detected, click on “XXX outgoing inside hyperlinks include nofollow attribute” to view an inventory of pages which have a nofollow tag.

Evaluation the pages and take away the nofollow tags in the event that they shouldn’t be there.

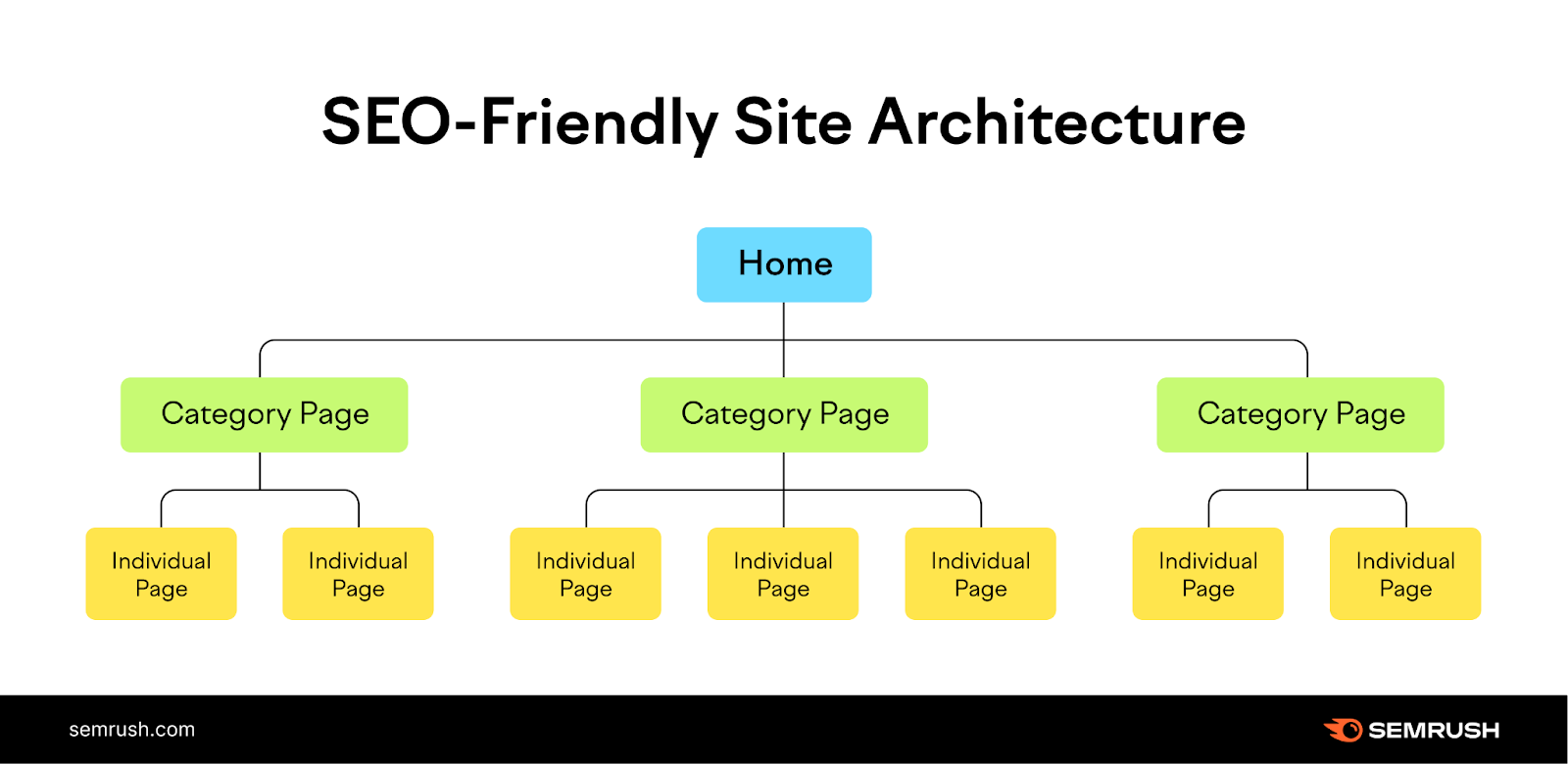

3. Unhealthy Web site Structure

Web site structure is how your pages are organized.

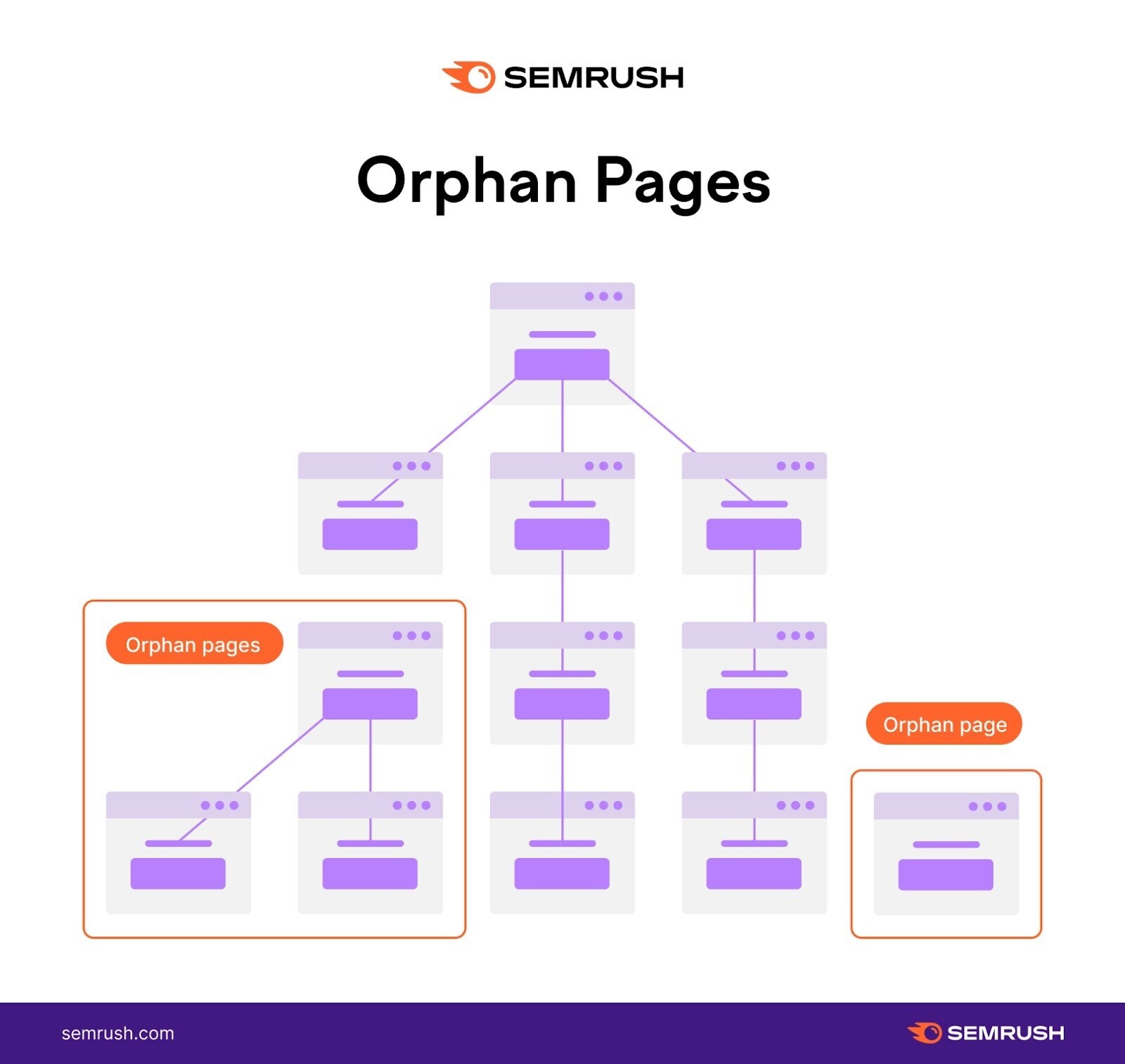

A strong website structure ensures each web page is just some clicks away from the homepage and there are not any orphan pages (i.e., pages with no inside hyperlinks pointing to them). Websites with robust website structure guarantee serps can simply entry all pages.

Unhealthy website website structure can create crawlability points. Discover the instance website construction depicted beneath. It has orphan pages.

There isn’t a linked path for serps to entry these pages from the homepage. So they could go unnoticed when serps crawl the positioning.

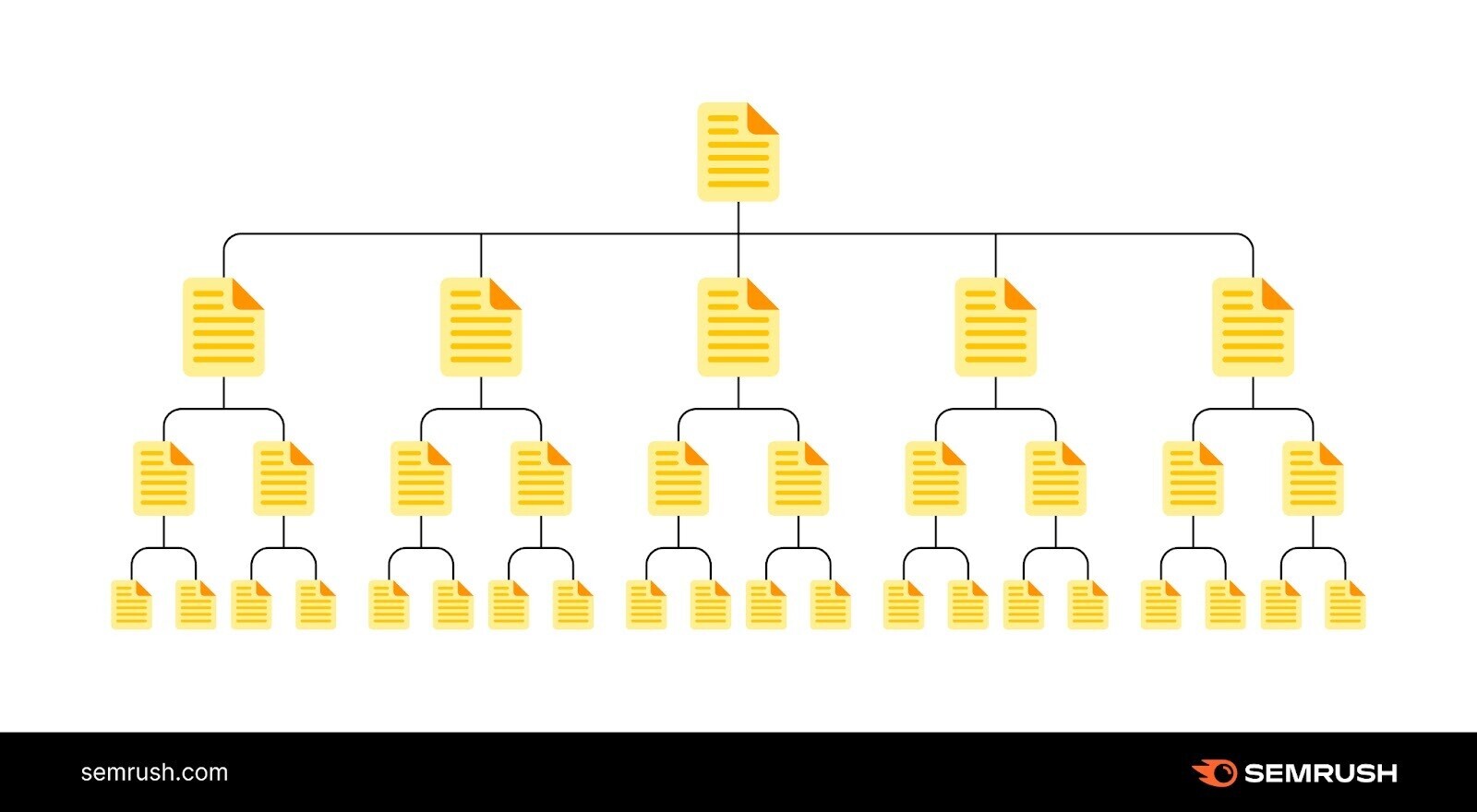

The answer is simple: Create a website construction that logically organizes your pages in a hierarchy with inside hyperlinks.

Like this:

Within the instance above, the homepage hyperlinks to classes, which then hyperlink to particular person pages in your website.

And supply a transparent path for crawlers to seek out all of your pages.

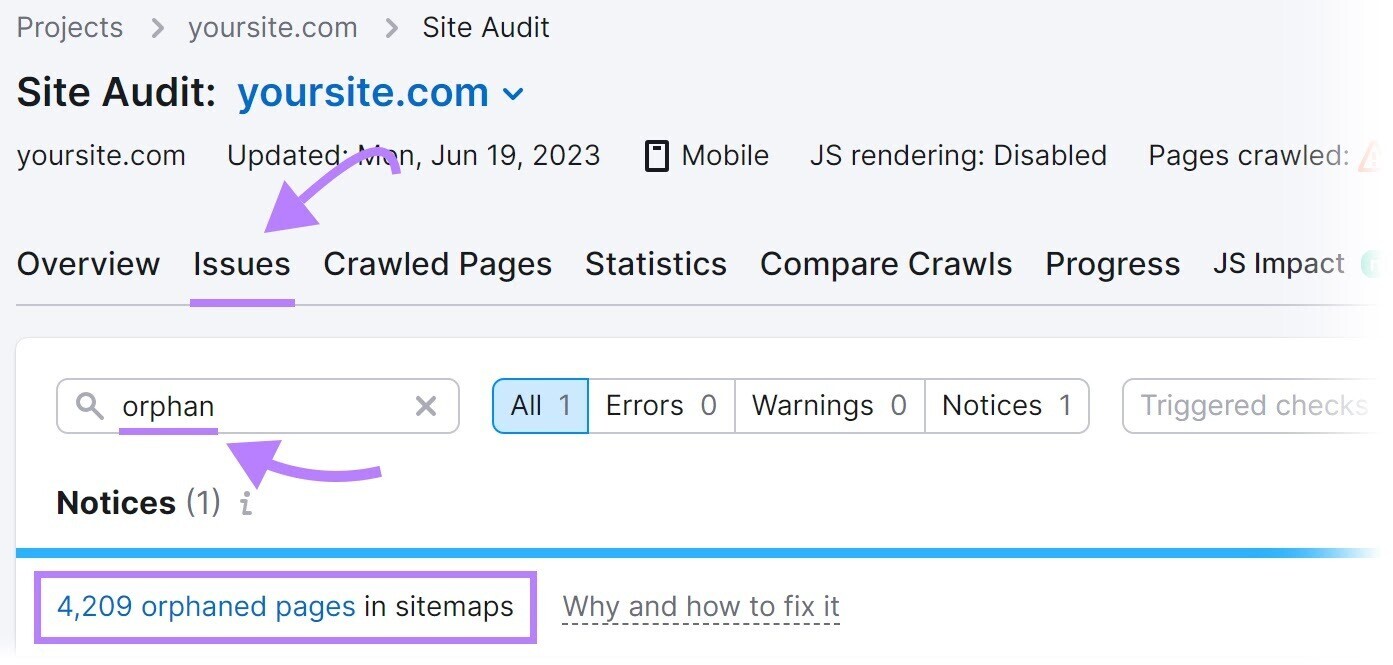

4. Lack of Inner Hyperlinks

Pages with out inside hyperlinks can create crawlability issues.

Engines like google could have bother discovering these pages.

Establish your orphan pages. And add inside hyperlinks to them to keep away from crawlability points.

Discover orphan pages utilizing Semrush’s Web site Audit device.

Configure the device to run your first audit.

As soon as the audit is full full, go to the “Points” tab and seek for “orphan.”

You’ll see whether or not there are any orphan pages current in your website.

To unravel this potential drawback, add inside hyperlinks to orphan pages from related pages in your website.

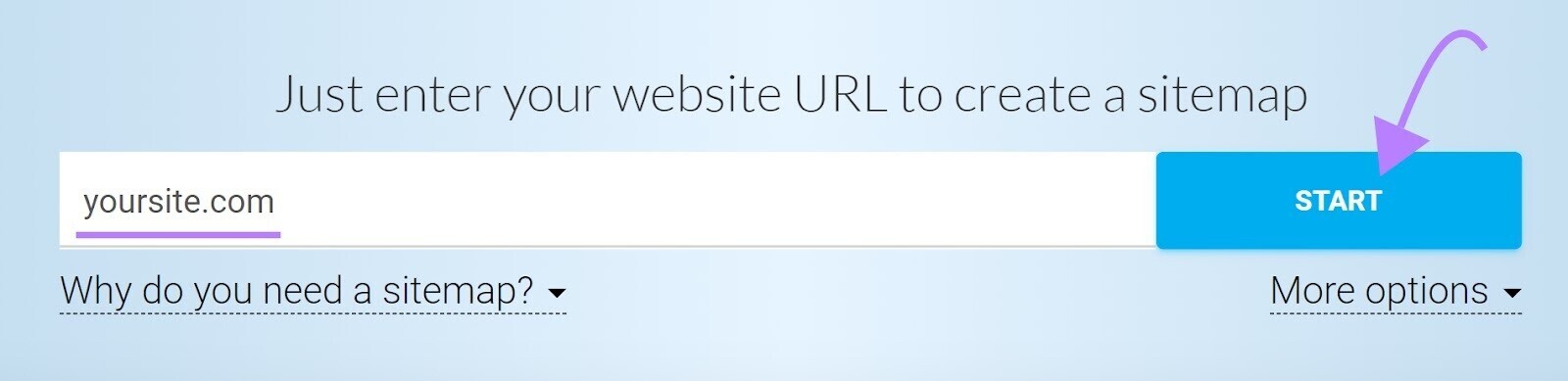

5. Unhealthy Sitemap Administration

A sitemap offers an inventory of pages in your website that you really want serps to crawl, index, and rank.

In case your sitemap excludes pages meant to be crawled, they may go unnoticed. And create crawlability points.

Remedy by recreating a sitemap that features all of the pages meant to be crawled.

A device akin to XML Sitemaps may also help.

Enter your web site URL, and the device will generate a sitemap for you robotically.

Then, save the file as “sitemap.xml” and add it to the basis listing of your web site.

For instance, in case your web site is www.instance.com, then your sitemap URL ought to be accessed at www.instance.com/sitemap.xml.

Lastly, submit your sitemap to Google in your Google Search Console account.

Click on “Sitemaps” within the left-hand menu. Enter your sitemap URL and click on “Submit.”

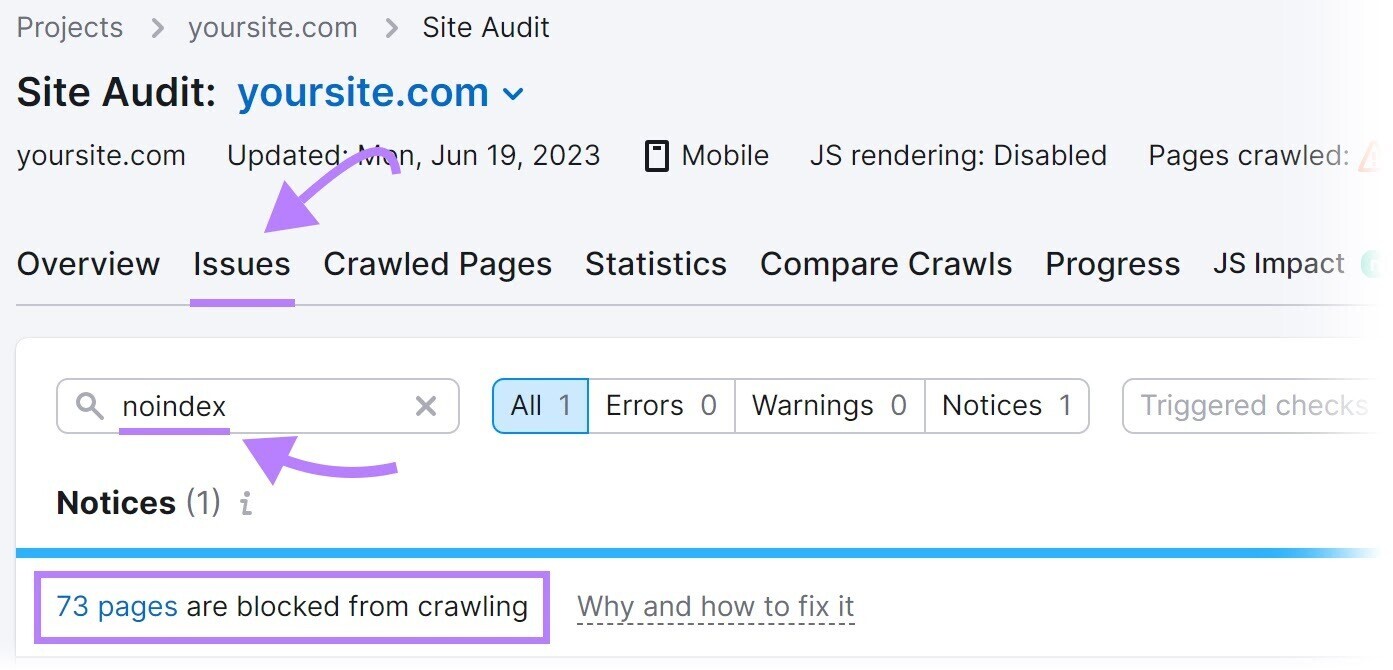

6. ‘Noindex’ Tags

A “noindex” meta robots tag instructs serps to not index the web page.

The tag seems like this:

<meta title="robots" content material="noindex">

Though the “noindex” tag is meant to regulate indexing, it may possibly create crawlability points if you happen to go away it in your pages for a very long time.

Google treats long-term “noindex” tags as “nofollow,” as confirmed by Google’s John Muller.

Over time, Google will cease crawling the hyperlinks on these pages altogether.

So, in case your pages will not be getting crawled, long-term “noindex” tags may very well be the wrongdoer.

Establish pages with a “noindex” tag utilizing Semrush’s Web site Audit device.

Arrange a mission within the device and run your first crawl.

As soon as the crawl is full, head over to the “Points” tab and seek for “noindex.”

The device will record pages in your website with a “noindex” tag.

Evaluation the pages and take away the “noindex” tag the place applicable.

Word: Having “noindex” tag on some pages—pay-per-click (PPC) touchdown pages and “thanks” pages, for instance—is widespread apply to maintain them out of Google’s index. It’s an issue solely whenever you noindex pages meant to rank in serps. Take away the “noindex” tag on these pages to keep away from indexability and crawlability points.

7. Gradual Web site Pace

Web site velocity is how shortly your website masses. Gradual website velocity can negatively impression crawlability.

When search engine bots go to your website, they’ve restricted time to crawl—generally known as a crawl price range.

Gradual website velocity means it takes longer for pages to load. And reduces the variety of pages bots can crawl inside that crawl session.

Which implies vital pages may very well be excluded from crawling.

Work to resolve this drawback by bettering your general web site efficiency and velocity.

Begin with our information to web page velocity optimization.

8. Inner Damaged Hyperlinks

Damaged hyperlinks are hyperlinks that time to useless pages in your website.

They return a “404 error” like this:

Damaged hyperlinks can have a major impression on web site crawlability.

Search engine bots comply with hyperlinks to find and crawl extra pages in your web site.

A damaged hyperlink acts as a useless finish and prevents search engine bots from accessing the linked web page.

This interruption can hinder the thorough crawling of your web site.

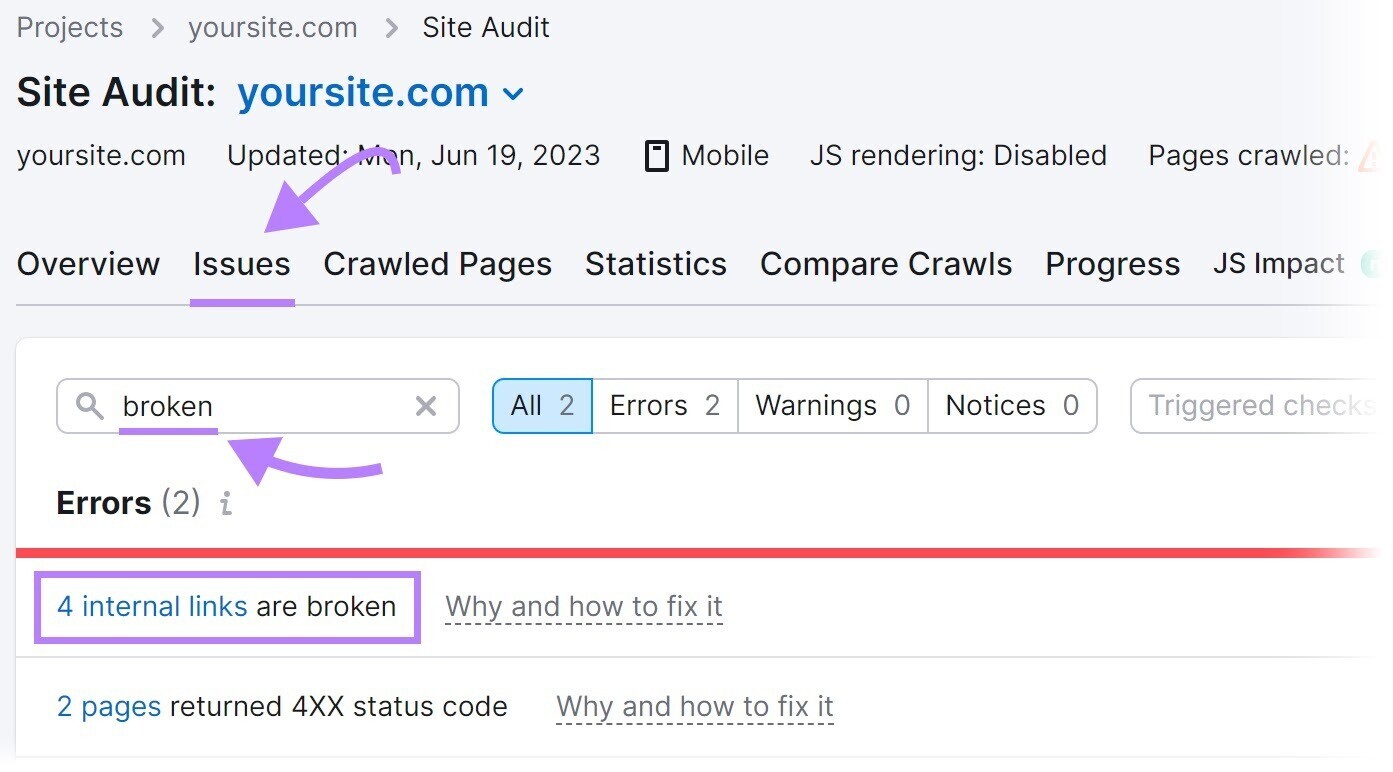

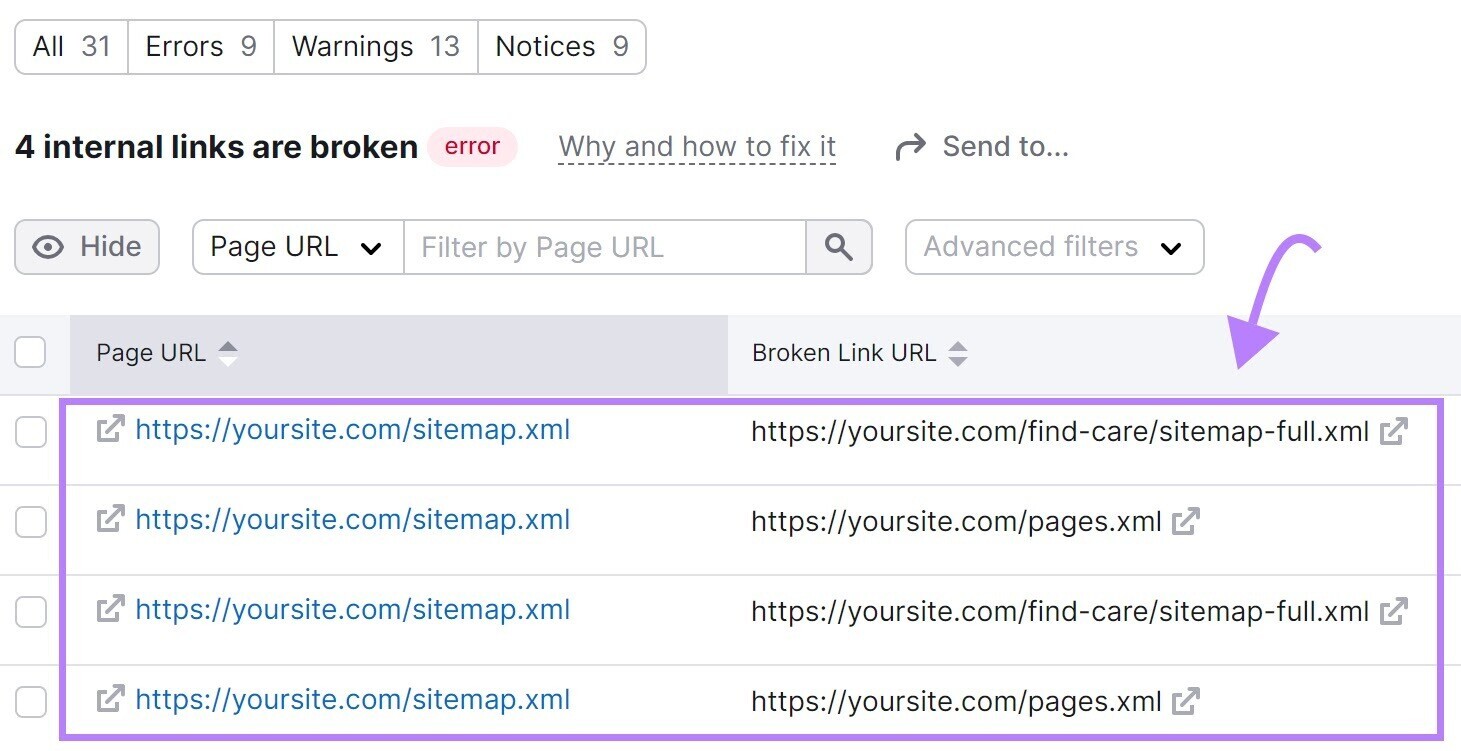

To seek out damaged hyperlinks in your website, use the Web site Audit device.

Navigate to the “Points” tab and seek for “damaged.”

Subsequent, click on “# inside hyperlinks are damaged.” You’ll see a report itemizing all of your damaged hyperlinks.

To repair damaged hyperlinks, change the hyperlink, restore the lacking web page, or add a 301 redirect to a different related web page in your website.

9. Server-Facet Errors

Server-side errors, akin to a 500 HTTP standing code, disrupt the crawling course of.

Server-side errors point out that the server could not fulfill the request, which makes it troublesome for bots to entry and crawl your web site’s content material.

Often monitor your web site’s server well being to determine and clear up for server-side errors.

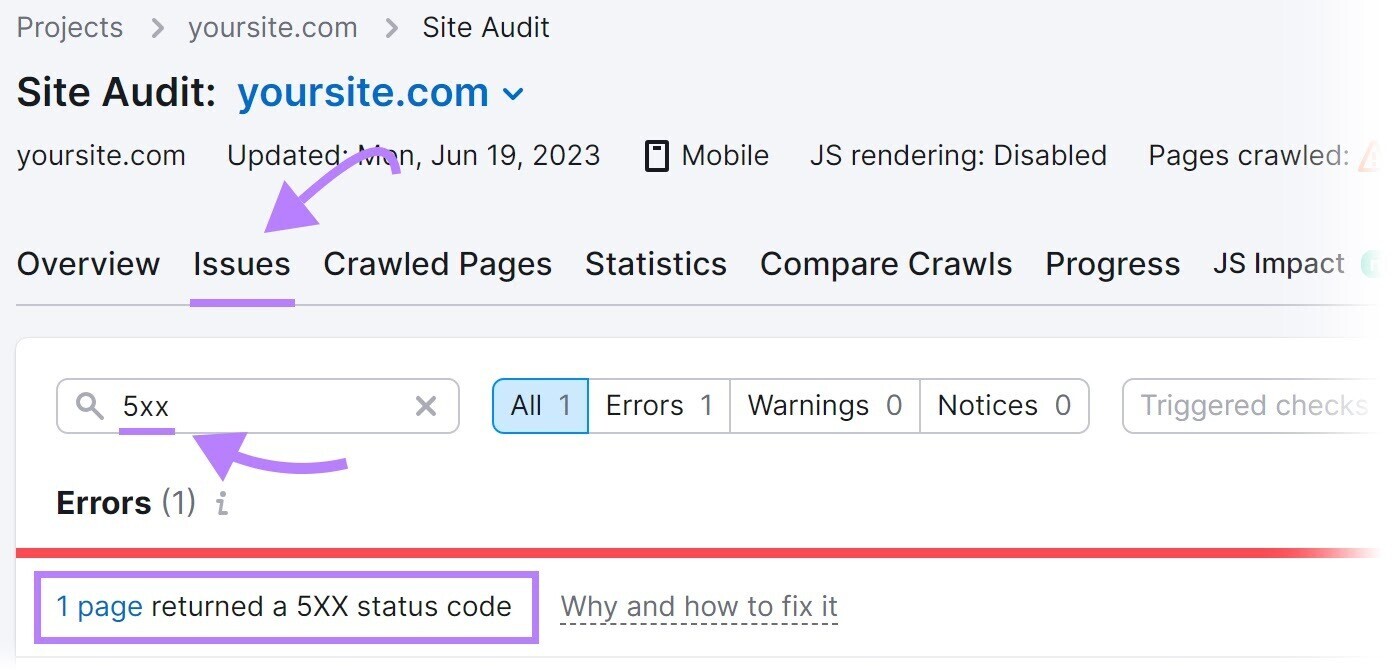

Semrush’s Web site Audit device may also help.

Seek for “5xx” within the “Points” tab to verify for server-side errors.

If errors are current, click on “# pages returned a 5XX standing code” to view an entire record of affected pages.

Then, ship this record to your developer to configure the server correctly.

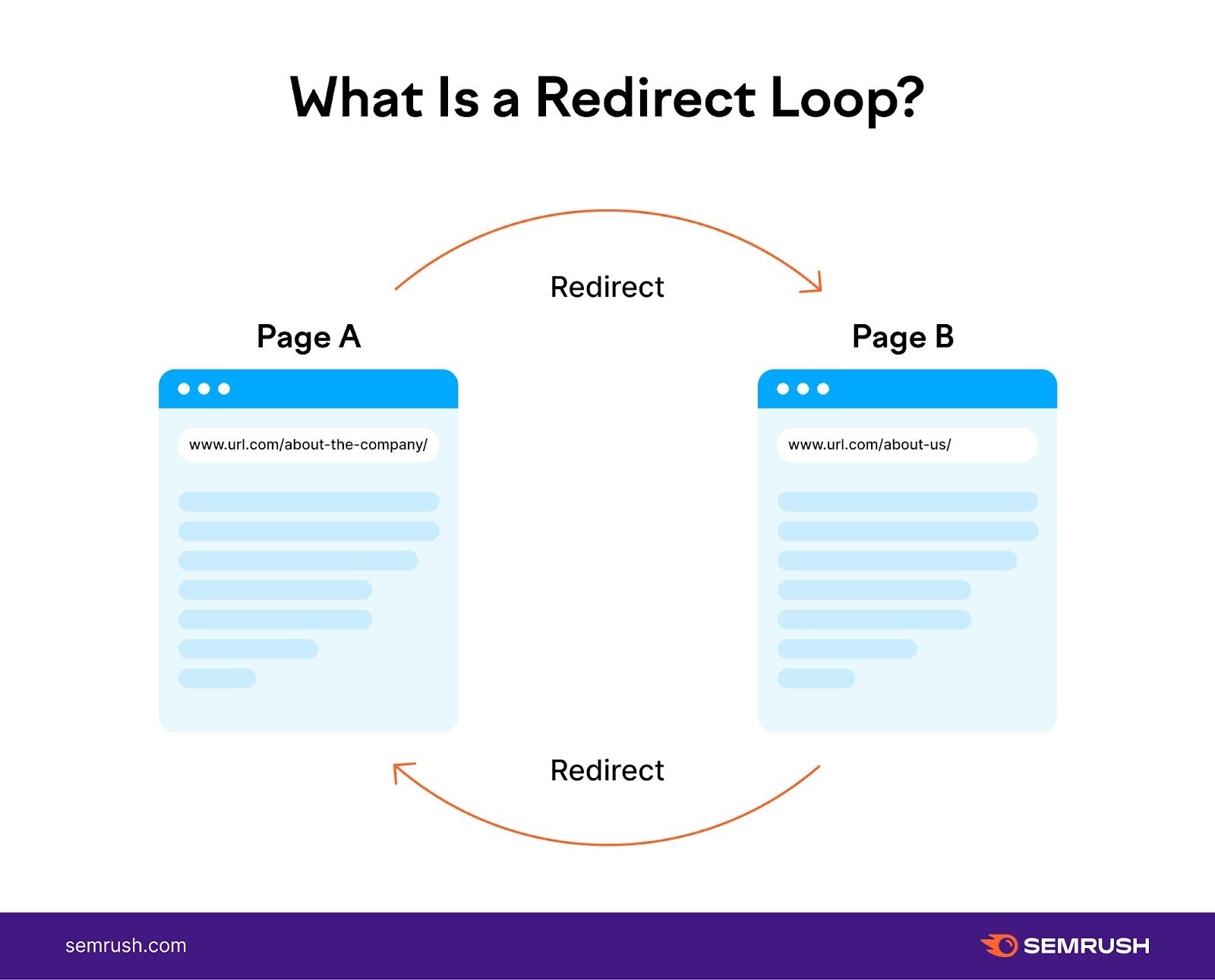

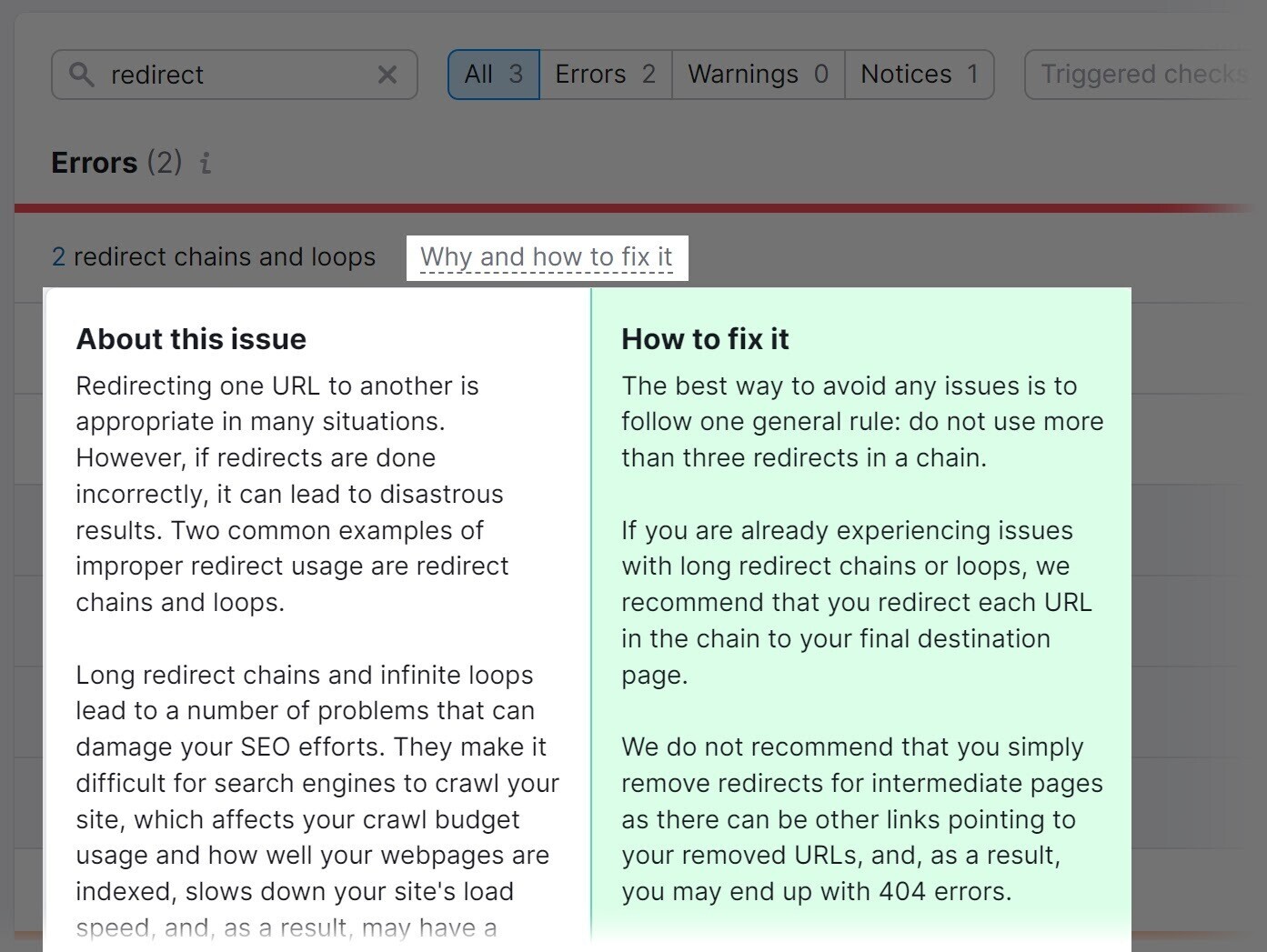

10. Redirect Loops

A redirect loop is when one web page redirects to a different, which in flip redirects again to the unique web page, forming a steady loop.

Redirect loops lure search engine bots in an limitless cycle of redirects between two (or extra) pages.

Bots proceed following redirects with out reaching the ultimate vacation spot—losing essential crawl price range time that may very well be spent on vital pages.

Remedy by figuring out and fixing redirect loops in your website.

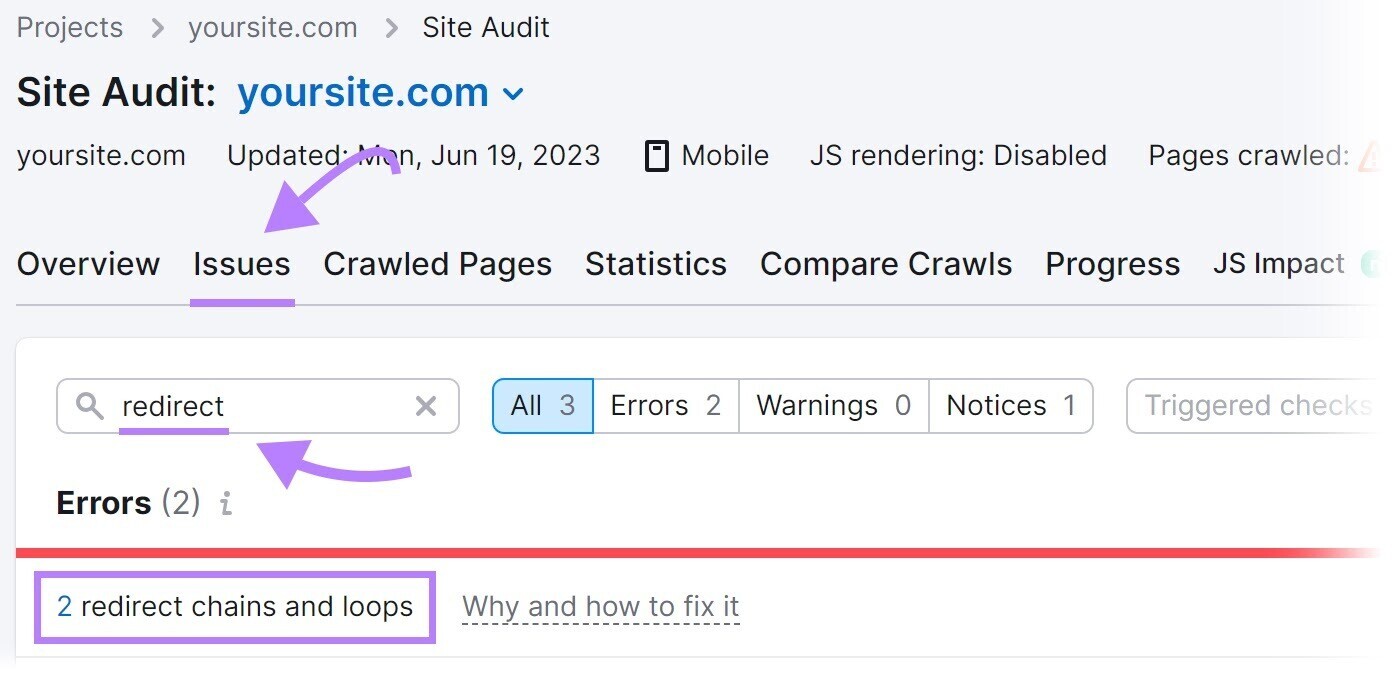

The Web site Audit device may also help.

Seek for “redirect” within the “Points” tab.

The device will show redirect loops and supply recommendation on easy methods to repair them.

11. Entry Restrictions

Pages with entry restrictions, akin to these behind login kinds or paywalls, can stop search engine bots from crawling and indexing these pages.

Because of this, these pages could not seem in search outcomes, limiting their visibility to customers.

It is smart to have sure pages restricted. For instance, membership-based web sites or subscription platforms typically have restricted pages which can be accessible solely to paying members or registered customers.

This permits the positioning to offer unique content material, particular provides, or customized experiences. To create a way of worth and incentivize customers to subscribe or grow to be members.

But when important parts of your web site are restricted, that’s a crawlability mistake.

Assess the need of restricted entry for every web page. Hold restrictions on pages that really require them. Take away restrictions on others.

Rid Your Web site of Crawlability Points

Crawlability points have an effect on your search engine marketing efficiency.

Semrush’s Web site Audit device is a one-stop resolution for detecting and fixing points that have an effect on crawlability.

Enroll free of charge to get began.