Parameter-efficient fine-tuning (PEFT) methods seek to adapt large language models through updates to a small number of weights. However, much of the recent interpretability work has shown that representations encode rich semantic information, suggesting that editing these representations might be a better and more powerful alternative. Pre-trained large models are often fine-tuned for new domains or tasks, and through fine-tuning a single base model can be adapted to a wide variety of tasks even when only small amounts of in-domain data are available. However, fine-tuning an entire model is resource-intensive and expensive, especially for language models with a very large number of parameters.

PEFT methods tackle the high cost of fine-tuning the whole model by updating only a small fraction of the total weights, which reduces both training time and memory usage. More importantly, PEFT methods have demonstrated performance comparable to full fine-tuning in several practical settings. Adapters, a common family of PEFT methods, learn an edit that can be added to a subset of model weights or to an additional set of weights that operate alongside the frozen base model. Recent adapters like LoRA reduce the number of trainable parameters in the learned weight updates by using low-rank approximations instead of full weight matrices.

With earlier work suggesting that editing representations might be a better alternative to PEFT methods, in this article we will be talking about Representation Fine-tuning (ReFT) methods, which operate on a frozen model and learn task-specific interventions on hidden representations. This article aims to cover the ReFT framework in depth: we explore its mechanism, methodology, and architecture, along with how it compares with state-of-the-art frameworks. So let’s get started.

To adapt pre-trained language models to new domains and tasks, current frameworks frequently fine-tune them, because fine-tuning lets a single base model be adapted to a variety of tasks even with a small amount of in-domain data. Although fine-tuning does improve overall performance, it is expensive, especially when the language model has a very large number of parameters. To address this and reduce the associated costs, PEFT frameworks update only a small fraction of the total weights, which reduces both training time and memory usage while achieving performance comparable to full fine-tuning in practical scenarios. Adapters, a common family of PEFTs, work by learning an edit that can be added to a subset of model weights or to an additional set of weights that operate alongside the base model with frozen weights. Building on LoRA, QLoRA has demonstrated that it is possible to train full-precision adapters on top of reduced-precision models without affecting performance. Adapters are usually more efficient and effective than other methods that introduce new model components.

A defining feature of current state-of-the-art PEFT frameworks is that they modify weights rather than representations. However, interpretability research has shown that representations encode rich semantic information, suggesting that editing representations might be a better and more powerful approach than weight updates. This hypothesis forms the foundation of the ReFT framework, which trains interventions instead of adapting model weights: it manipulates a small fraction of the model's representations in order to steer its behavior toward solving downstream tasks at inference time. ReFT methods are drop-in replacements for weight-based PEFT frameworks. The ReFT approach draws inspiration from recent work in large-model interpretability that intervenes on representations to find faithful causal mechanisms and to steer model behavior at inference time, and it can therefore be seen as a generalization of representation-editing methods. Building on this, LoReFT (Low-rank Linear Subspace ReFT) is a strong and effective instance of ReFT. It is a parameterization of ReFT that intervenes on hidden representations in the linear subspace spanned by a low-rank projection matrix, and it builds directly on the Distributed Alignment Search (DAS) framework.

Moving along, in contrast to full fine-tuning, PEFT trains only a small fraction of the model's parameters while still adapting the model to downstream tasks. PEFT methods can be classified into three main categories:

- Adapter-based methods: Adapter-based methods train additional modules, such as fully-connected layers, on top of the pre-trained model with frozen weights. Series adapters insert components between the attention or multilayer perceptron (MLP) layers of the language model, whereas parallel adapters add modules alongside existing components. Since adapters add new components that cannot easily be folded into existing model weights, they impose an additional burden at inference time.

- LoRA: LoRA and its recent variants approximate additive weight updates during training using low-rank matrices. They require no additional overhead at inference because the weight updates can be merged into the model, which is why they are considered the strongest current PEFT frameworks.

- Prompt-based methods: Prompt-based methods add randomly initialized soft tokens to the input and train their embeddings while keeping the weights of the language model frozen. Their performance is often unsatisfactory compared with other PEFT approaches, and they also carry a significant inference overhead.
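To make the LoRA point above concrete, here is a minimal NumPy sketch of a low-rank additive update and of merging it into the frozen weight. The matrix sizes, the names `A`, `B`, and `lora_forward`, and the random values standing in for trained factors are all illustrative assumptions, not details from the article.

```python
import numpy as np

rng = np.random.default_rng(0)
d_out, d_in, rank = 64, 48, 4  # illustrative sizes (assumption)

# Frozen pre-trained weight: never updated during fine-tuning.
W = rng.normal(size=(d_out, d_in))

# Trainable low-rank factors. Random values stand in for trained ones here.
A = rng.normal(scale=0.01, size=(rank, d_in))
B = rng.normal(scale=0.01, size=(d_out, rank))

def lora_forward(x, W, A, B):
    """Base projection plus the low-rank additive update (B @ A) applied to x."""
    return W @ x + B @ (A @ x)

x = rng.normal(size=d_in)
adapted = lora_forward(x, W, A, B)

# Merging: fold the update into the weight once, then serve with a single matmul.
W_merged = W + B @ A
merged = W_merged @ x

full_params = W.size
lora_params = A.size + B.size
print(f"trainable: {lora_params} of {full_params} "
      f"({100 * lora_params / full_params:.2f}%)")
```

Because the merged weight gives the same output as the two-branch forward pass, the low-rank update adds no extra work at inference, which is the property the LoRA bullet describes.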

Instead of updating weights, the ReFT framework learns interventions that modify a small fraction of the total representations. Furthermore, recent work on representation engineering and activation steering has shown that adding fixed steering vectors to the residual stream can provide a degree of control over the generations of pre-trained large models without resource-intensive fine-tuning. Other frameworks have shown that editing representations with a learned scaling and translation operation can approach, but not surpass, the performance of LoRA adapters on a wide array of tasks with fewer learned parameters. The success of these frameworks across a range of tasks shows that the representations produced by pre-trained language models carry rich semantics. Their performance remains sub-optimal, however, so PEFTs have continued to be the state-of-the-art approach with no additional inference burden.
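The two kinds of representation edit mentioned above can be sketched in a few lines. This is only a generic illustration: the function names, the scaling factor `alpha`, and the toy vectors are assumptions, and the code does not reproduce any specific framework's implementation.

```python
import numpy as np

def add_steering_vector(h, v, alpha=1.0):
    """Activation steering: shift a hidden state by a fixed direction v."""
    return h + alpha * v

def scale_and_translate(h, scale, shift):
    """Element-wise scaling and translation of a hidden state.

    In a trained system `scale` and `shift` would be learned vectors.
    """
    return scale * h + shift

d = 8  # toy hidden size (assumption)
h = np.arange(d, dtype=float)

steered = add_steering_vector(h, v=np.ones(d), alpha=0.5)
edited = scale_and_translate(h, scale=np.full(d, 2.0), shift=np.full(d, -1.0))

# With scale = 1 and shift = 0 the edit is the identity, so training can
# start from the unmodified base model.
unchanged = scale_and_translate(h, scale=np.ones(d), shift=np.zeros(d))
```

Both edits leave the model weights untouched and act only on the hidden state, which is the property that ReFT generalizes.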

ReFT: Methodology and Architecture

To keep the presentation simple, the ReFT framework assumes that its target model is a transformer-based language model that produces contextualized representations of sequences of tokens. Given a sequence of n input tokens, the model first embeds these tokens into a list of representations, after which the m layers successively compute each list of hidden representations as a function of the previous list. Each hidden representation is a vector, and the language model uses the final hidden representations to produce its predictions. The ReFT framework considers both masked language models and autoregressive language models. According to the linear representation hypothesis, concepts in neural networks are encoded within linear subspaces of representations. Recent work has found this claim to hold for neural networks trained on natural language as well as on other input distributions.

Furthermore, in interpretability research, the causal abstraction framework uses interchange interventions to establish the causal role of neural network components in implementing particular behaviors. The logic behind an interchange intervention is as follows: if one fixes a representation to what it would have been for a counterfactual input, and this intervention changes the model's output consistently in the way predicted by the claims being made about the component that produces that representation, then the component plays a causal role in the behavior. Although there are several methods, the distributed interchange intervention is the ideal way to test whether a concept is encoded in a linear subspace of a representation, as claimed by the linear representation hypothesis. The DAS method has previously been used to find linear representations in language models of entity attributes, sentiment, linguistic features, and mathematical reasoning. However, several experiments have indicated that DAS is highly expressive: it can find causally efficacious subspaces even when the transformer language model has been randomly initialized and has therefore yet to learn any task-specific representations. This has led to debate over whether DAS is effective and reliable enough for interpretability tasks.
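A distributed interchange intervention is commonly written as DII(b, s, R) = b + Rᵀ(Rs − Rb), where b is the base representation, s is the representation from the counterfactual (source) input, and R is a low-rank projection with orthonormal rows. The sketch below is a minimal NumPy illustration of that formula; the helper names, dimensions, and random vectors are assumptions for demonstration only.

```python
import numpy as np

def orthonormal_rows(r, d, seed=0):
    """Return an r x d matrix with orthonormal rows (QR of a random matrix)."""
    rng = np.random.default_rng(seed)
    q, _ = np.linalg.qr(rng.normal(size=(d, r)))  # q is d x r, orthonormal columns
    return q.T

def distributed_interchange(base, source, R):
    """Overwrite base's coordinates in the row space of R with those of source."""
    return base + R.T @ (R @ source - R @ base)

d, r = 16, 3  # toy hidden size and subspace rank (assumption)
R = orthonormal_rows(r, d)
rng = np.random.default_rng(1)
base, source = rng.normal(size=d), rng.normal(size=d)

out = distributed_interchange(base, source, R)

# Inside the subspace the result matches the source representation;
# outside the subspace the base representation is left untouched.
P = R.T @ R  # projector onto the subspace
outside_before = base - P @ base
outside_after = out - P @ out
```

The intervention therefore swaps only the r-dimensional subspace picked out by R, which is what makes it a test of whether a concept lives in a linear subspace.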

The expressivity of DAS suggests that the approach could be an ideal tool for controlling the behavior of language models, in line with existing work on controllable generation and responsible editing. Therefore, to adapt language models to downstream tasks, the ReFT framework uses the distributed interchange intervention operation to build a new parameter-efficient method. A ReFT method is a set of interventions, and the framework enforces that for any two interventions operating on the same layer, the intervention positions must be disjoint, with the parameters of all intervention functions remaining independent. As a result, ReFT is a generic framework that encompasses interventions on hidden representations during the model's forward pass.
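As a rough illustration of how this turns into a trainable method, the sketch below uses the LoReFT form h + Rᵀ(Wh + b − Rh), in which the source side of the interchange intervention is replaced by a learned linear map, and applies a set of interventions to one layer while enforcing disjoint positions. The class and function names are hypothetical, the random `R`, `W`, and `b` stand in for trained parameters, and no training loop is shown.

```python
import numpy as np

class LoReFTIntervention:
    """Edit h -> h + R^T (W h + b - R h), where R has orthonormal rows."""

    def __init__(self, d, r, seed=0):
        rng = np.random.default_rng(seed)
        q, _ = np.linalg.qr(rng.normal(size=(d, r)))
        self.R = q.T                                  # r x d low-rank projection
        self.W = rng.normal(scale=0.1, size=(r, d))   # stand-in for a learned map
        self.b = np.zeros(r)                          # stand-in for a learned bias

    def __call__(self, h):
        return h + self.R.T @ (self.W @ h + self.b - self.R @ h)

def apply_interventions(hidden, interventions):
    """Apply (positions, fn) pairs to the (n_tokens, d) hidden states of one layer."""
    seen = set()
    out = hidden.copy()
    for positions, fn in interventions:
        if seen & set(positions):
            raise ValueError("intervention positions on the same layer must be disjoint")
        seen |= set(positions)
        for p in positions:
            out[p] = fn(hidden[p])
    return out

rng = np.random.default_rng(0)
hidden = rng.normal(size=(6, 16))        # 6 tokens, hidden size 16 (assumption)
first = LoReFTIntervention(16, 4, seed=1)
last = LoReFTIntervention(16, 4, seed=2)
edited = apply_interventions(hidden, [([0, 1], first), ([5], last)])
```

Only the listed positions change, the base model's weights are never touched, and each intervention keeps its own independent parameters.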

ReFT: Experiments and Results

To evaluate its performance against existing PEFT frameworks, ReFT is tested across four diverse natural language processing benchmarks covering over 20 datasets, with the primary goal of providing a rich picture of how LoReFT performs in different scenarios. Furthermore, when LoReFT is applied in practice, developers need to decide how many interventions to learn, along with the input positions and layers to apply each one to. To this end, the ReFT framework tunes four hyperparameters:

- The number of prefix positions to intervene on.

- The number of suffix positions to intervene on.

- Which set of layers to intervene on.

- Whether or not to tie intervention parameters across different positions in the same layer.

By doing this, the ReFT framework simplifies the hyperparameter search space and ensures only a fixed additional inference cost that does not scale with the length of the prompt.
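The four hyperparameters above can be sketched as a small helper that picks the intervened positions and counts the separately parameterized interventions. The function names are hypothetical, and the untied case is assumed here to give every intervened position its own parameters, which is one reading of the fourth hyperparameter.

```python
def intervention_positions(n_tokens, n_prefix, n_suffix):
    """Indices of the first n_prefix and last n_suffix tokens, without overlap."""
    prefix = list(range(min(n_prefix, n_tokens)))
    # Start the suffix after the prefix so short prompts never reuse a position.
    suffix_start = max(n_tokens - n_suffix, len(prefix))
    suffix = list(range(suffix_start, n_tokens))
    return prefix, suffix

def count_intervention_modules(n_prefix, n_suffix, layers, tie_positions):
    """Number of separately parameterized interventions for a configuration."""
    per_layer = 1 if tie_positions else n_prefix + n_suffix
    return per_layer * len(layers)

# The number of intervened positions is the same for a short and a long prompt.
short_prefix, short_suffix = intervention_positions(10, n_prefix=2, n_suffix=3)
long_prefix, long_suffix = intervention_positions(1000, n_prefix=2, n_suffix=3)

tied = count_intervention_modules(2, 3, layers=[0, 1, 2, 3], tie_positions=True)
untied = count_intervention_modules(2, 3, layers=[0, 1, 2, 3], tie_positions=False)
```

Since only a fixed number of prefix and suffix positions are edited, the extra work per forward pass stays constant as the prompt grows.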

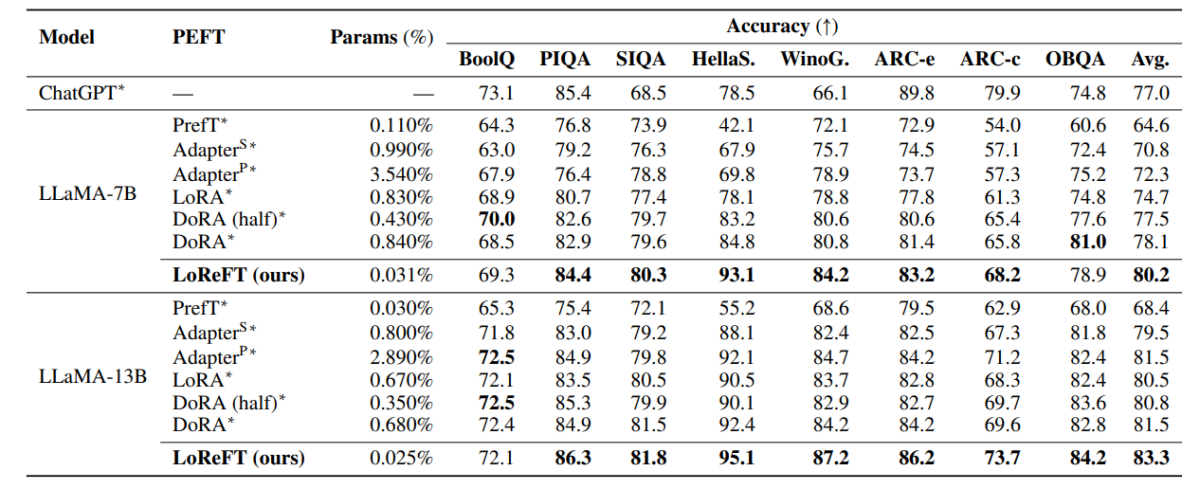

The table above compares the accuracy of LLaMA-7B and LLaMA-13B tuned with LoReFT against existing PEFT methods across 8 commonsense reasoning datasets. As can be observed, LoReFT outperforms existing PEFT approaches by a decent margin despite having far fewer parameters. The LoReFT figures are the average of three runs with distinct parameter seeds. Param(%) is calculated by dividing the number of trainable parameters by the total number of parameters of the base model.
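The param(%) calculation can be worked through with a short example. The sketch assumes each LoReFT intervention holds a low-rank projection of shape rank × hidden size, a linear map of the same shape, and a bias of length rank. The hidden size, rank, layer count, and the round 7-billion total are illustrative assumptions and are not taken from the table.

```python
def loreft_params_per_intervention(hidden_size, rank):
    """Projection (rank x hidden) + linear map (rank x hidden) + bias (rank)."""
    return 2 * rank * hidden_size + rank

def param_percent(trainable, total):
    """Trainable parameters as a percentage of the base model's parameters."""
    return 100.0 * trainable / total

# Illustrative configuration (assumption): one tied intervention per layer.
hidden_size, rank, n_layers = 4096, 8, 32
total_params = 7_000_000_000  # round figure for a "7B" model

trainable = n_layers * loreft_params_per_intervention(hidden_size, rank)
print(f"{trainable} trainable parameters, "
      f"{param_percent(trainable, total_params):.4f}% of the base model")
```

Under these assumptions the trainable share is a few hundredths of a percent of the base model, which shows the scale that param(%) is meant to capture.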

The corresponding table for arithmetic reasoning compares the accuracy of LLaMA-7B and LLaMA-13B tuned with LoReFT against existing PEFT methods across 4 different arithmetic reasoning datasets, reporting the average of three runs with distinct random seeds. Here, despite having far fewer params(%), LoReFT outperforms prefix-tuning but does not match LoRA and adapters.

The corresponding table for natural language understanding compares the accuracy of RoBERTa-base and RoBERTa-large tuned with LoReFT against existing PEFT methods on the GLUE benchmark, reporting the average of five runs with distinct random seeds. Despite having far fewer params(%), LoReFT achieves performance comparable to existing PEFT methods.

Final Thoughts

In this article, we have talked about LoReFT, a powerful alternative to existing PEFT frameworks that achieves strong performance across benchmarks from four different domains while offering up to 50 times the parameter efficiency of previous state-of-the-art PEFT methods. Pre-trained large models are often fine-tuned for new domains or tasks, and a single base model can be adapted to a wide variety of tasks even with only small amounts of in-domain data. However, fine-tuning an entire model is resource-intensive and expensive, especially for language models with a very large number of parameters. PEFT methods address this cost by updating only a small fraction of the total weights, which reduces training time and memory usage. Notably, LoReFT establishes new state-of-the-art performance on commonsense reasoning, instruction-following, and natural language understanding against the strongest PEFTs.