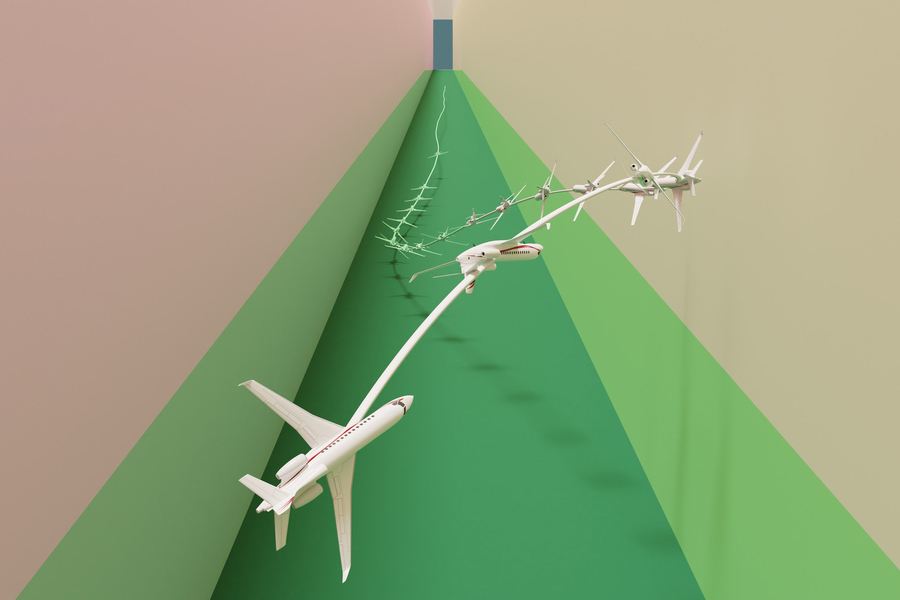

MIT researchers developed a machine-learning technique that can autonomously drive a car or fly a plane through a very difficult “stabilize-avoid” scenario, in which the vehicle must stabilize its trajectory to arrive at and stay within some goal region, while avoiding obstacles. Image: Courtesy of the researchers

By Adam Zewe | MIT News Office

In the film “Top Gun: Maverick,” Maverick, played by Tom Cruise, is charged with training young pilots to complete a seemingly impossible mission: to fly their jets deep into a rocky canyon, staying so low to the ground they cannot be detected by radar, then rapidly climb out of the canyon at an extreme angle, avoiding the rock walls. Spoiler alert: With Maverick’s help, these human pilots accomplish their mission.

A machine, on the other hand, would struggle to complete the same pulse-pounding task. To an autonomous aircraft, for instance, the most straightforward path toward the target conflicts with what the machine needs to do to avoid colliding with the canyon walls or to stay undetected. Many existing AI methods aren’t able to overcome this conflict, known as the stabilize-avoid problem, and would be unable to reach their goal safely.

MIT researchers have developed a new technique that can solve complex stabilize-avoid problems better than other methods. Their machine-learning approach matches or exceeds the safety of existing methods while providing a tenfold increase in stability, meaning the agent reaches and remains stable within its goal region.

In an experiment that would make Maverick proud, their technique effectively piloted a simulated jet aircraft through a narrow corridor without crashing into the ground.

“This has been a longstanding, challenging problem. A lot of people have looked at it but didn’t know how to handle such high-dimensional and complex dynamics,” says Chuchu Fan, the Wilson Assistant Professor of Aeronautics and Astronautics, a member of the Laboratory for Information and Decision Systems (LIDS), and senior author of a new paper on this technique.

Fan is joined by lead author Oswin So, a graduate student. The paper will be presented at the Robotics: Science and Systems conference.

The stabilize-avoid problem

Many approaches tackle complex stabilize-avoid problems by simplifying the system so they can solve it with straightforward math, but the simplified results often don’t hold up to real-world dynamics.

More effective techniques use reinforcement learning, a machine-learning method where an agent learns by trial and error with a reward for behavior that gets it closer to a goal. But there are really two goals here, remaining stable and avoiding obstacles, and finding the right balance is tedious.
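To see why balancing the two goals by hand is tedious, consider a minimal sketch in which both are folded into one weighted reward. Everything here is a hypothetical toy: the two candidate paths, their step and collision counts, and the `collision_weight` parameter are made up for illustration and do not come from the researchers' experiments. The step penalty stands in for reaching the goal, and the collision penalty stands in for safety.

```python
# Hypothetical toy: two candidate paths scored by a single weighted reward.
# Each path is (name, steps_to_goal, collisions); all numbers are made up.
PATHS = [
    ("direct", 10, 1),  # shortest route, but clips an obstacle once
    ("detour", 14, 0),  # longer route that stays clear of obstacles
]


def weighted_return(steps, collisions, collision_weight):
    """Scalar return: -1 per step taken, minus a weighted collision penalty."""
    return -steps - collision_weight * collisions


def best_path(collision_weight):
    """Return the name of the path with the highest weighted return."""
    best = max(PATHS, key=lambda p: weighted_return(p[1], p[2], collision_weight))
    return best[0]


# Sweep the penalty weight to see where the preferred path flips.
for w in (1.0, 3.0, 5.0, 10.0):
    print(f"collision_weight={w}: best path = {best_path(w)}")
```

With a small weight the unsafe direct path wins, and only a large enough weight makes the safe detour preferable. In a real system the right weight is not known in advance and has to be found by repeated tuning.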

The MIT researchers broke the problem down into two steps. First, they reframe the stabilize-avoid problem as a constrained optimization problem. In this setup, solving the optimization enables the agent to reach and stabilize to its goal, meaning it stays within a certain region. By applying constraints, they ensure the agent avoids obstacles, So explains.
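One generic way to write such a problem is sketched below, under assumed notation rather than the paper's exact formulation. Let $x_t$ be the state at step $t$, $\pi$ the control policy, $f$ the system dynamics, $\ell(x) \ge 0$ a cost that is zero inside the goal region, and $h(x)$ a function that is positive exactly when $x$ hits an obstacle. All of these symbols are illustrative assumptions.

$$
\min_{\pi} \; \sum_{t=0}^{\infty} \ell(x_t)
\quad \text{subject to} \quad
x_{t+1} = f\big(x_t, \pi(x_t)\big), \qquad
h(x_t) \le 0 \ \text{ for all } t \ge 0 .
$$

Driving the sum down pulls the agent into the goal region and keeps it there, while the inequality rules out any trajectory that touches an obstacle.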

Then, for the second step, they reformulate that constrained optimization problem into a mathematical representation known as the epigraph form and solve it using a deep reinforcement learning algorithm. The epigraph form lets them bypass the difficulties other methods face when using reinforcement learning.
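In textbook terms, the epigraph form of "minimize J(x) subject to h(x) ≤ 0" introduces an extra variable z that bounds the cost: "minimize z subject to J(x) ≤ z and h(x) ≤ 0." The sketch below illustrates that idea on a made-up one-dimensional problem. The cost, the constraint, the grid, and all function names are assumptions for illustration only. The researchers solve their problem with deep reinforcement learning, not with the brute-force search used here.

```python
# Toy problem (hypothetical, not from the paper):
#   minimize cost(x) = (x - 3)^2  subject to  constraint(x) = x - 1 <= 0.
# Epigraph form: minimize z subject to cost(x) <= z and constraint(x) <= 0.


def cost(x):
    return (x - 3.0) ** 2


def constraint(x):
    return x - 1.0  # feasible when <= 0


# Candidate decisions on a grid from -5.00 to 5.00 in steps of 0.01.
XS = [i / 100 for i in range(-500, 501)]


def inner(z):
    """Pick the x with the smallest worst-case violation for budget z.

    The violation is max(cost(x) - z, constraint(x)); a value <= 0 means
    some x meets both the cost budget and the constraint.
    """
    x = min(XS, key=lambda v: max(cost(v) - z, constraint(v)))
    return x, max(cost(x) - z, constraint(x))


def solve_epigraph(lo=0.0, hi=100.0, iters=60):
    """Bisect on z for the smallest budget the inner problem can meet."""
    for _ in range(iters):
        mid = 0.5 * (lo + hi)
        _, violation = inner(mid)
        if violation <= 0.0:
            hi = mid  # budget achievable: try a smaller one
        else:
            lo = mid  # budget too tight: relax it
    x_star, _ = inner(hi)
    return x_star, hi


x_star, z_star = solve_epigraph()
print(f"x* = {x_star:.2f}, z* = {z_star:.4f}")
```

For this toy problem the search settles on x = 1, the feasible point closest to the unconstrained minimum at x = 3, with a cost bound z close to 4. The point of the reformulation is that cost and constraint are compared on a single scale inside the `max`, so no hand-tuned penalty weight is needed.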

“But deep reinforcement learning isn’t designed to solve the epigraph form of an optimization problem, so we couldn’t just plug it into our problem. We had to derive the mathematical expressions that work for our system. Once we had those new derivations, we combined them with some existing engineering tricks used by other methods,” So says.

No points for second place

To test their approach, they designed a number of control experiments with different initial conditions. For instance, in some simulations, the autonomous agent needs to reach and stay inside a goal region while making drastic maneuvers to avoid obstacles that are on a collision course with it.

This video shows how the researchers used their technique to effectively fly a simulated jet aircraft in a scenario where it had to stabilize to a target near the ground while maintaining a very low altitude and staying within a narrow flight corridor. Courtesy of the researchers.

When compared with several baselines, their approach was the only one that could stabilize all trajectories while maintaining safety. To push their method even further, they used it to fly a simulated jet aircraft in a scenario one might see in a “Top Gun” movie. The jet had to stabilize to a target near the ground while maintaining a very low altitude and staying within a narrow flight corridor.

This simulated jet model was open-sourced in 2018 and had been designed by flight control experts as a testing challenge. Could researchers create a scenario that their controller could not fly? But the model was so complicated it was difficult to work with, and it still couldn’t handle complex scenarios, Fan says.

The MIT researchers’ controller was able to prevent the jet from crashing or stalling while stabilizing to the goal far better than any of the baselines.

In the future, this technique could be a starting point for designing controllers for highly dynamic robots that must meet safety and stability requirements, like autonomous delivery drones. Or it could be implemented as part of a larger system. Perhaps the algorithm is only activated when a car skids on a snowy road to help the driver safely navigate back to a stable trajectory.

Navigating extreme scenarios that a human wouldn’t be able to handle is where their approach really shines, So adds.

“We believe that a goal we should strive for as a field is to give reinforcement learning the safety and stability guarantees that we will need to provide us with assurance when we deploy these controllers on mission-critical systems. We think this is a promising first step toward achieving that goal,” he says.

Moving forward, the researchers want to enhance their technique so it is better able to take uncertainty into account when solving the optimization. They also want to investigate how well the algorithm works when deployed on hardware, since there will be mismatches between the dynamics of the model and those in the real world.

“Professor Fan’s team has improved reinforcement learning performance for dynamical systems where safety matters. Instead of just hitting a goal, they create controllers that ensure the system can reach its target safely and stay there indefinitely,” says Stanley Bak, an assistant professor in the Department of Computer Science at Stony Brook University, who was not involved with this research. “Their improved formulation allows the successful generation of safe controllers for complex scenarios, including a 17-state nonlinear jet aircraft model designed in part by researchers from the Air Force Research Lab (AFRL), which incorporates nonlinear differential equations with lift and drag tables.”

The work is funded, in part, by MIT Lincoln Laboratory under the Safety in Aerobatic Flight Regimes program.

MIT Information