Unlocking all of the insights hidden within manufacturing data has the potential to improve efficiency, reduce costs, and boost overall productivity across many industries. Finding those insights is often challenging because most manufacturing data exists as unstructured data in the form of documents, equipment maintenance records, and data sheets. Finding insights in this data to unlock business value is both a challenging and exciting task, requiring considerable effort but offering significant potential impact.

AWS Industrial IoT services, such as AWS IoT TwinMaker and AWS IoT SiteWise, offer capabilities that allow for the creation of a data hub for manufacturing data, where the work needed to gain insights can start in a more manageable way. You can securely store and access operational data like sensor readings, crucial documents such as Standard Operating Procedures (SOP) and Failure Mode and Effect Analysis (FMEA), and enterprise data sourced from ERP and MES systems. The managed industrial Knowledge Graph in AWS IoT TwinMaker gives you the ability to model complex systems and create Digital Twins of your physical systems.

Generative AI (GenAI) opens up new ways to make data more accessible and approachable to end users such as shop floor operators and operation managers. You can now use natural language to ask AI complex questions, such as identifying an SOP to fix a production issue, or getting suggestions for potential root causes based on observed production alarms. Amazon Bedrock, a managed service designed for building and scaling Generative AI applications, makes it easy for builders to develop and manage Generative AI applications.

In this blog post, we will walk you through how to use AWS IoT TwinMaker and Amazon Bedrock to build an AI Assistant that can help operators and other end users diagnose and resolve manufacturing production issues.

Solution overview

We implemented our AI Assistant as a module in the open-source “Cookie Factory” sample solution. The Cookie Factory sample solution is a fully customizable blueprint which developers can use to develop an operation digital twin tailored for manufacturing monitoring. Powered by AWS IoT TwinMaker, the digital twin lets operations managers monitor live production statuses as well as go back in time to investigate historical events. We recommend watching the AWS IoT TwinMaker for Smart Manufacturing video to get a comprehensive introduction to the solution.

Figure 1 shows the components of our AI Assistant module. We will focus on the Generative AI Assistant and skip the details of the rest of the Cookie Factory solution. Please feel free to refer to our previous blog post and documentation if you’d like an overview of the entire solution.

The Cookie Factory AI Assistant module is a Python application that serves a chat user interface (UI) and hosts a Large Language Model (LLM) Agent that responds to user input. In this post, we’ll show you how to build and run the module in your development environment. Please refer to the Cookie Factory sample solution GitHub repository for information on more advanced deployment options, including how to containerize our setup so that it’s easy to deploy as a serverless application using AWS Fargate.

The LLM Agent is implemented using the LangChain framework. LangChain is a flexible library for constructing complex workflows that leverage LLMs and additional tools to orchestrate tasks in response to user inputs. Amazon Bedrock provides the high-performing LLMs needed to power our solution, including Claude from Anthropic and Amazon Titan. To implement the retrieval augmented generation (RAG) pattern, we used Chroma, an open-source in-memory vector database, for development environment use. For production use, we’d encourage you to swap Chroma for a more scalable solution such as Amazon OpenSearch Service.

To help the AI Assistant better respond to the user’s domain specific questions, we ground the LLMs by using the Knowledge Graph feature in AWS IoT TwinMaker and user provided documentation (such as equipment manuals stored in Amazon S3). We also use AWS IoT SiteWise to provide equipment measurements, and a custom data source implemented using AWS Lambda to get simulated alarm event data. These are used as input to the LLMs to generate issue diagnosis reports or troubleshooting suggestions for the user.

A typical user interaction flow can be described as follows:

- The user requests the AI Assistant in the dashboard app. The dashboard app loads the AI Assistant chat UI in the iframe.

- The user sends a prompt to the AI Assistant in the chat UI.

- The LLM Agent in the AI Assistant determines the best workflow to answer the user’s question and then executes that workflow. Each workflow has its own strategy, which can use additional tools to collect contextual information and generate a response based on the original user input and the context data.

- The response is sent back to the user in the chat UI.

Building and running the AI Assistant

Prerequisites

For this tutorial, you’ll need a bash terminal with Python 3.8 or higher installed on Linux, Mac, or Windows Subsystem for Linux, and an AWS account. We also recommend using an AWS Cloud9 instance or an Amazon Elastic Compute Cloud (Amazon EC2) instance.

Please first follow the Cookie Factory sample solution documentation to deploy the Cookie Factory workspace and resources. In the following section, we assume you have created an AWS IoT TwinMaker Workspace named CookieFactoryV3. <PROJECT_ROOT> refers to the folder that contains the cookie factory v3 sample solution.

Running the AI Assistant

To run the AI Assistant in your development environment, complete the following steps:

- Set the environment variables. Run the following command in your terminal. The AWS_REGION and WORKSPACE_ID should match the AWS region you use and the AWS IoT TwinMaker workspace you have created.

- Install the required dependencies. Run the following commands in your current terminal.

- Launch the AI Assistant module. Run the following commands in your current terminal.

Once the module is started, it will launch your default browser and open the chat UI. You can close the chat UI.

- Launch the Cookie Factory dashboard app. Run the following commands in your current terminal.

After the server is began, go to

https://localhost:8443to open the dashboard (see Determine 2).

Figure 2. A screenshot of the dashboard app shows an overview of the Bakersville factory
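If the module fails to start, a common first thing to rule out is a missing environment variable from step 1. The following is a minimal sketch of a pre-flight check, not part of the sample solution: AWS_REGION and WORKSPACE_ID come from the steps above, while the helper name `missing_settings` is hypothetical.

```python
import os

# Variable names taken from the setup steps; the helper itself is illustrative.
REQUIRED_VARS = ("AWS_REGION", "WORKSPACE_ID")


def missing_settings(env=None):
    """Return the required variable names that are unset or empty."""
    env = os.environ if env is None else env
    return [name for name in REQUIRED_VARS if not env.get(name)]


if __name__ == "__main__":
    missing = missing_settings()
    if missing:
        print("Set these variables before starting the AI Assistant:", ", ".join(missing))
    else:
        print("Environment looks ready for workspace", os.environ["WORKSPACE_ID"])
```

Passing a plain dictionary instead of the real environment makes the check easy to exercise without touching your shell.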

AI Assisted issue diagnosis and troubleshooting

We prepared an alarm event with simulated data to demonstrate how the AI Assistant can be used to help users diagnose production quality issues. To trigger the event, click on the “Run event simulation” button on the navigation bar (see Figure 3).

The dashboard will display an alert, indicating that one of the cookie production lines is producing more deformed cookies than expected. When the alarm is acknowledged, the AI Assistant panel will open. The event details are passed to the AI Assistant so it has the context about the current event. You can click the “Run Issue Diagnosis” button to ask AI to conduct a diagnosis based on the collected information.

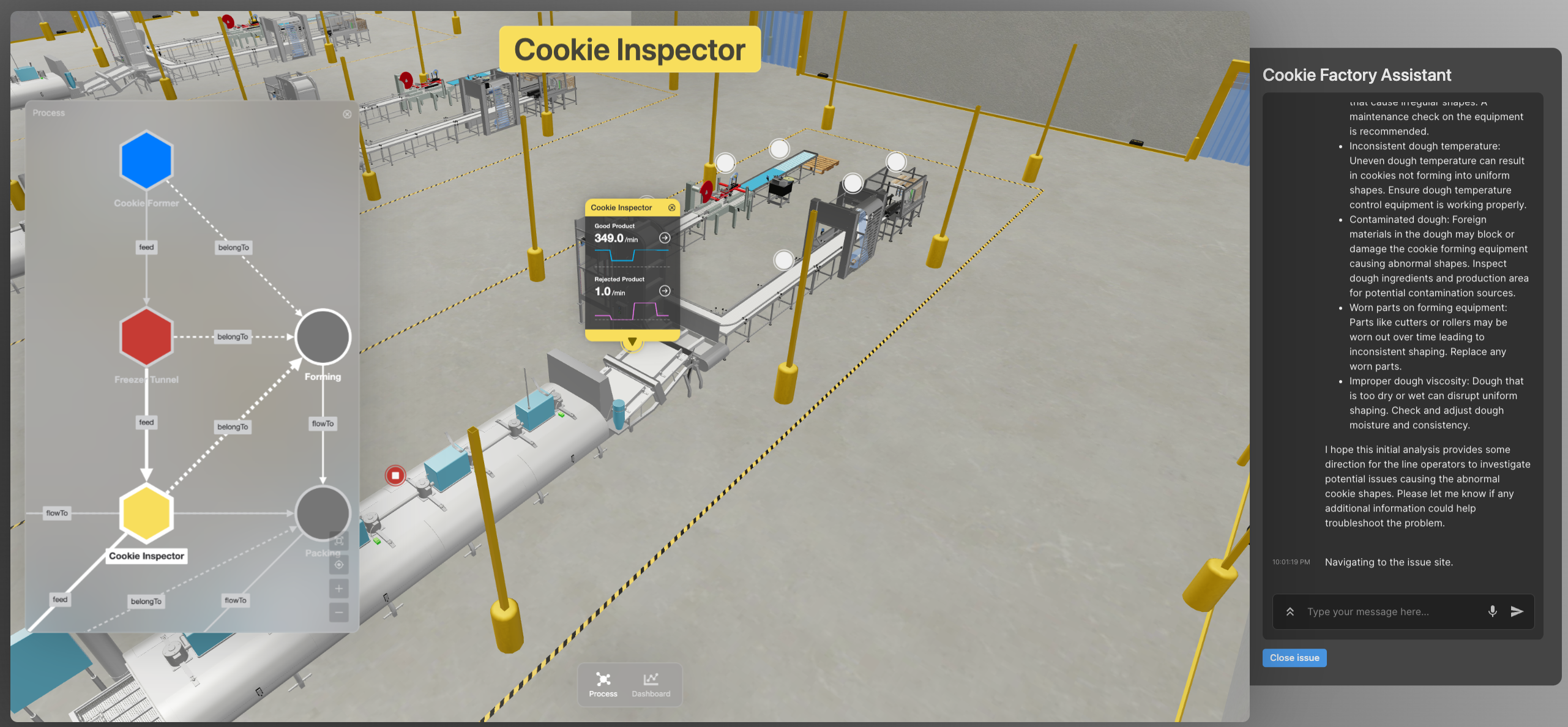

Once the diagnosis is completed, the AI Assistant will suggest a few potential root causes and provide a button to navigate to the site of the issue in the 3D viewer. Clicking on the button will change the 3D viewer’s focus to the equipment that triggers the issue. From there you can use the Process View or 3D View to inspect related processes or equipment.

Figure 5. AI Assistant shows the site of the issue in 3D. Left panel shows the related equipment and processes.

You can use the AI Assistant to find SOPs for a particular piece of equipment. Try asking “how to fix the temperature fluctuation issue in the freezer tunnel” in the chat box. The AI will respond with the SOP found in the documents associated with the related equipment and show links to the original documents.

Finally, you can click the “Close issue” button at the bottom of the panel to clear the event simulation.

Internals of the AI Assistant

The AI Assistant chooses different strategies to answer a user’s questions. This allows it to use additional tools to generate answers to real-world problems that LLMs cannot solve by themselves. Figure 6 shows a high-level execution flow that represents how user input is routed between multiple LLM Chains to generate a final output.

The MultiRouteChain is the main orchestration Chain. It invokes the LLMRouterChain to find the destination chain that’s best suited to respond to the original user input. It then invokes the destination chain with the original user input. When the response is sent back to the MultiRouteChain, it post-processes it and returns the result to the user.
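The route, invoke, post-process loop can be sketched in plain Python. This is only an illustration of the pattern under stated assumptions: the functions below are hypothetical stand-ins for the destination chains, and simple keyword hints replace the LLM-based router that the real module uses.

```python
from typing import Callable, Dict, Tuple


# Each destination is a plain function standing in for an LLM Chain.
def graph_query(text: str) -> str:
    return f"[graph] looking up entities for: {text}"


def domain_qa(text: str) -> str:
    return f"[domain] searching documents for: {text}"


def general_qa(text: str) -> str:
    return f"[general] answering: {text}"


# Keyword hints replace the LLM-based router purely for illustration.
ROUTE_HINTS: Dict[str, Tuple[str, ...]] = {
    "graph_query": ("list", "focus", "show"),
    "domain_qa": ("sop", "sops", "fix", "manual"),
}

DESTINATIONS: Dict[str, Callable[[str], str]] = {
    "graph_query": graph_query,
    "domain_qa": domain_qa,
    "general_qa": general_qa,
}


def pick_route(text: str) -> str:
    """Choose a destination name; fall back to the general chain."""
    words = text.lower().split()
    for name, hints in ROUTE_HINTS.items():
        if any(hint in words for hint in hints):
            return name
    return "general_qa"


def answer(text: str) -> str:
    """Route the input, invoke the destination, then post-process."""
    raw = DESTINATIONS[pick_route(text)](text)
    return raw.strip()


if __name__ == "__main__":
    print(answer("list all cookie lines"))
```

Adding a skill in this sketch means registering one more entry in `DESTINATIONS` and telling the router when to pick it, which mirrors how extra destination chains extend the Agent.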

We use different foundation models (FM) in different Chains so that we can balance inference cost, quality, and speed, and choose the best FM for a particular use case. With Amazon Bedrock, it’s easy to switch between different FMs and run experiments to optimize model selection.
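One way to keep model selection easy to change is to hold it in a single mapping. The sketch below is an assumption-heavy illustration: the post does not say which FM each Chain uses, so the assignments are made up, and the model IDs and request body shapes reflect the Claude text-completion and Titan text formats as commonly documented around the time of the post. Verify both against the current Amazon Bedrock documentation before relying on them.

```python
import json

# Hypothetical chain-to-model assignment; not taken from the sample solution.
MODEL_FOR_CHAIN = {
    "router": "anthropic.claude-instant-v1",
    "domain_qa": "anthropic.claude-v2",
    "general_qa": "amazon.titan-text-express-v1",
}


def build_request(chain: str, prompt: str, max_tokens: int = 512) -> dict:
    """Build modelId/body values for the model assigned to a chain."""
    model_id = MODEL_FOR_CHAIN[chain]
    if model_id.startswith("anthropic."):
        # Assumed legacy Claude text-completion request shape.
        body = {
            "prompt": f"\n\nHuman: {prompt}\n\nAssistant:",
            "max_tokens_to_sample": max_tokens,
        }
    elif model_id.startswith("amazon.titan"):
        # Assumed Titan text request shape.
        body = {
            "inputText": prompt,
            "textGenerationConfig": {"maxTokenCount": max_tokens},
        }
    else:
        raise ValueError(f"No request format known for {model_id}")
    return {"modelId": model_id, "body": json.dumps(body)}


if __name__ == "__main__":
    print(build_request("general_qa", "Summarize the alarm.")["modelId"])
```

The returned dictionary is shaped so it could be passed as keyword arguments to the `invoke_model` operation of a boto3 `bedrock-runtime` client. Swapping a model for an experiment then becomes a one-line change in `MODEL_FOR_CHAIN`.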

The GraphQueryChain is an LLM Chain that translates natural language into a TwinMaker Knowledge Graph query. We use this capability to find information about the entities mentioned in the user question so that the LLMs can generate better output. For example, when the user asks “focus the 3D viewer to the freezer tunnel”, we use the GraphQueryChain to find out what is meant by “freezer tunnel”. This capability can also be used directly to find information in the TwinMaker Knowledge Graph in response to a question like “list all cookie lines”.
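To make the second half of that step concrete, here is a minimal sketch of running a Knowledge Graph query once it has been written. In the real chain the LLM generates the query; here a hand-written template stands in for it. The helper names are hypothetical, the query text and the `LIKE` pattern are assumptions to check against the AWS IoT TwinMaker knowledge graph documentation, and the client is expected to behave like a boto3 `iottwinmaker` client exposing `execute_query`.

```python
def build_entity_query(name_fragment: str) -> str:
    """Build a query matching entities whose name contains a fragment."""
    # Double single quotes so the fragment cannot break out of the literal
    # (assumes SQL-style quote escaping).
    safe = name_fragment.replace("'", "''")
    return (
        "SELECT e FROM EntityGraph MATCH (e) "
        f"WHERE e.entityName LIKE '%{safe}%'"
    )


def find_entities(client, workspace_id: str, name_fragment: str) -> list:
    """Run the query and return the row data of each result row."""
    response = client.execute_query(
        workspaceId=workspace_id,
        queryStatement=build_entity_query(name_fragment),
    )
    return [row["rowData"] for row in response.get("rows", [])]


class FakeTwinMakerClient:
    """Offline stand-in so the sketch runs without AWS credentials."""

    def execute_query(self, workspaceId, queryStatement):
        self.last_statement = queryStatement
        return {"rows": [{"rowData": [{"entityName": "Freezer Tunnel"}]}]}


if __name__ == "__main__":
    fake = FakeTwinMakerClient()
    print(find_entities(fake, "CookieFactoryV3", "freezer"))
```

With real credentials, the fake client could be replaced by `boto3.client("iottwinmaker")`, and the returned row data would then describe the matching entities in your workspace.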

The DomainQAChain is an LLM Chain that implements the RAG pattern. It can reliably answer domain specific questions using only the information found in the documents the user provided. For example, this LLM Chain can answer questions such as “find SOPs to fix temperature fluctuation in freezer tunnel” by retrieving information from user provided documentation and using it as domain specific context for the answer. TwinMaker Knowledge Graph provides additional context for the LLM Chain, such as the location of the document stored in S3.
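The retrieve-then-prompt idea behind RAG can be sketched with the standard library alone. This is a toy illustration, not the module's implementation: word-count vectors and cosine similarity stand in for real embeddings and the Chroma vector store, and the two documents are made-up sample text.

```python
import math
import re
from collections import Counter

# Made-up sample documents; real ones would come from Amazon S3.
DOCUMENTS = {
    "freezer_tunnel_sop.txt": (
        "SOP: to fix temperature fluctuation in the freezer tunnel, "
        "inspect the door seals and the coolant level."
    ),
    "mixer_manual.txt": (
        "Mixer manual: clean the mixing blades after every batch of cookie dough."
    ),
}


def embed(text: str) -> Counter:
    """Toy embedding: lowercase word counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[token] * b[token] for token in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(question: str, k: int = 1) -> list:
    """Return the names of the k documents most similar to the question."""
    query = embed(question)
    ranked = sorted(
        DOCUMENTS,
        key=lambda name: cosine(query, embed(DOCUMENTS[name])),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(question: str, k: int = 1) -> str:
    """Assemble a prompt that restricts the model to retrieved context."""
    context = "\n".join(DOCUMENTS[name] for name in retrieve(question, k))
    return (
        "Answer using only the context below. "
        "If the context is not enough, say so.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )


if __name__ == "__main__":
    print(build_prompt("find SOPs to fix temperature fluctuation in freezer tunnel"))
```

Returning document names from `retrieve` keeps the source of each answer traceable, which is what lets an assistant link back to the original documents.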

The GeneralQAChain is a fallback LLM Chain that tries to answer any question that does not match a more specific workflow. We can put guardrails in the prompt template to help keep the Agent from being too generic when responding to a user.
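A guardrail in a prompt template can be as simple as a few instructions that scope what the fallback chain may answer. The template below is a hypothetical example written for illustration; its wording and the helper name `render_general_prompt` are assumptions, not the template used by the sample solution.

```python
# Hypothetical guardrail wording; adjust it to your own use case.
GENERAL_QA_TEMPLATE = (
    "You are an assistant for operators of a cookie factory.\n"
    "Only answer questions about the factory, its equipment, "
    "and its production processes.\n"
    "If the question is unrelated or you are unsure, say that you cannot "
    "help and suggest asking about the factory instead.\n\n"
    "Question: {question}\n"
    "Answer:"
)


def render_general_prompt(question: str) -> str:
    """Fill the template with the trimmed user question."""
    return GENERAL_QA_TEMPLATE.format(question=question.strip())


if __name__ == "__main__":
    print(render_general_prompt("What is the weather today?"))
```

Keeping the guardrail text in one constant makes it straightforward to tune the Agent's tone and scope without touching the routing logic.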

This architecture is simple to customize and extend: you can adjust the prompt template to fit your use case better, or configure more destination chains in the router to give the Agent additional skills.

Clean up

To stop the AI Assistant Module, run the following commands in your terminal.

Please follow the Cookie Factory sample solution documentation to clean up the Cookie Factory workspace and resources.

Conclusion

In this post, you learned about the art of the possible by building an AI Assistant for manufacturing production monitoring and troubleshooting. Developers can use the sample solution we discussed as a starting point for more specialized solutions that can best empower their customers or users. The Knowledge Graph provided by AWS IoT TwinMaker offers an extensible architecture pattern for supplying additional curated information to the LLMs, grounding their responses in facts. You also experienced how users can interact with digital twins using natural language. We believe this functionality represents a paradigm shift for human-machine interactions and demonstrates how AI can help empower us all to do more with less by extracting knowledge from data much more efficiently and effectively than was previously possible.

To see this demo in action, make sure to attend Breakout Session IOT206 at re:Invent 2023 on Tuesday at 3:30 PM.

About the authors

Jiaji Zhou is a Principal Engineer with a focus on Industrial IoT and Edge at AWS. He has 10+ years of experience in the design, development, and operation of large-scale, data intensive web services. His interest areas also include data analytics, machine learning, and simulation. He works on AWS services including AWS IoT TwinMaker and AWS IoT SiteWise.

Chris Bolen is a Sr. Design Technologist with a focus on Industrial IoT applications at AWS. He specializes in user experience design and application prototyping. He is passionate about working with industrial users and builders to innovate and create delightful user experiences for customers.

Johnny Wu is a Sr. Software Engineer on the AWS IoT TwinMaker team at AWS. He joined AWS in 2014 and worked on NoSQL services for several years before moving into IoT services. Johnny is passionate about enabling builders to do more with less. He focuses on making it easier for customers to build digital twins.

Julie Zhao is a Senior Product Manager on Industrial IoT at AWS. She joined AWS in 2021 and brings three years of startup experience leading products in Industrial IoT. Prior to startups, she spent over 10 years in networking with Cisco and Juniper across engineering and product. She is passionate about building products in Industrial IoT.
