Image by Author

Just when you thought you had heard enough news about Large Language Models (LLMs), Microsoft Research has come out to shake up the market again. In June 2023, Microsoft Research released a paper called “Textbooks Are All You Need,” in which they introduced phi-1, a new large language model for code. phi-1 is a transformer-based model with 1.3B parameters, trained for 4 days on 8 A100 GPUs using a selection of “textbook quality” data from the web.

It seems like LLMs are getting smaller and smaller.

Now Microsoft Research introduces phi-1.5, a Transformer with 1.3B parameters, trained using the same data sources as phi-1. As mentioned above, phi-1 was trained on high-quality textbook data, whereas phi-1.5 was trained on synthetic data only.

phi-1.5 was trained on 32 A100-40G GPUs and completed training in 8 days. The aim behind phi-1.5 was to build an open-source model that can play a role in the research community: a non-restricted small model that lets researchers explore the different safety challenges of LLMs, such as reducing toxicity, enhancing controllability, and more.
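To put the two training runs in perspective, here is a back-of-the-envelope GPU-hour comparison using only the figures quoted in this article: phi-1 on 8 A100s for 4 days and phi-1.5 on 32 A100-40G GPUs for 8 days. It assumes every GPU ran for the full wall-clock period, and GPU-hours ignore differences between A100 variants, so treat the result as a rough sense of scale rather than an official compute figure.

```python
# Rough compute comparison using only the GPU counts and durations quoted above.
# Assumption: every GPU was busy for the whole run (24 hours per day).

def gpu_hours(num_gpus: int, days: float) -> float:
    """Total GPU-hours for a run of `days` days on `num_gpus` GPUs."""
    return num_gpus * days * 24

phi_1 = gpu_hours(num_gpus=8, days=4)      # phi-1: 8 A100s for 4 days
phi_1_5 = gpu_hours(num_gpus=32, days=8)   # phi-1.5: 32 A100-40G for 8 days

print(f"phi-1:   {phi_1:,.0f} GPU-hours")
print(f"phi-1.5: {phi_1_5:,.0f} GPU-hours")
print(f"ratio:   {phi_1_5 / phi_1:.0f}x")
```

This prints 768 GPU-hours for phi-1 and 6,144 for phi-1.5, an 8x increase for a model with the same 1.3B parameter count.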

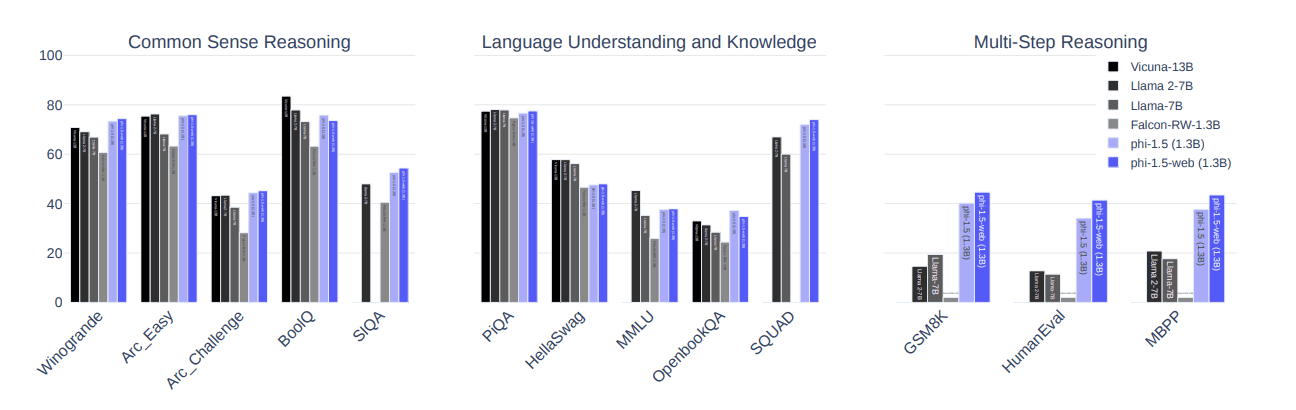

By using the ‘Synthetic Data Generation’ approach, phi-1.5 performs on par with models 5x its size on natural language benchmarks and has been shown to outperform most LLMs on more difficult reasoning tasks.

Pretty impressive, right?

The model’s learning journey is very interesting. It draws data from a variety of sources, including Python code snippets from StackOverflow, as well as synthetic Python textbooks and exercises generated by GPT-3.5-turbo-0301.

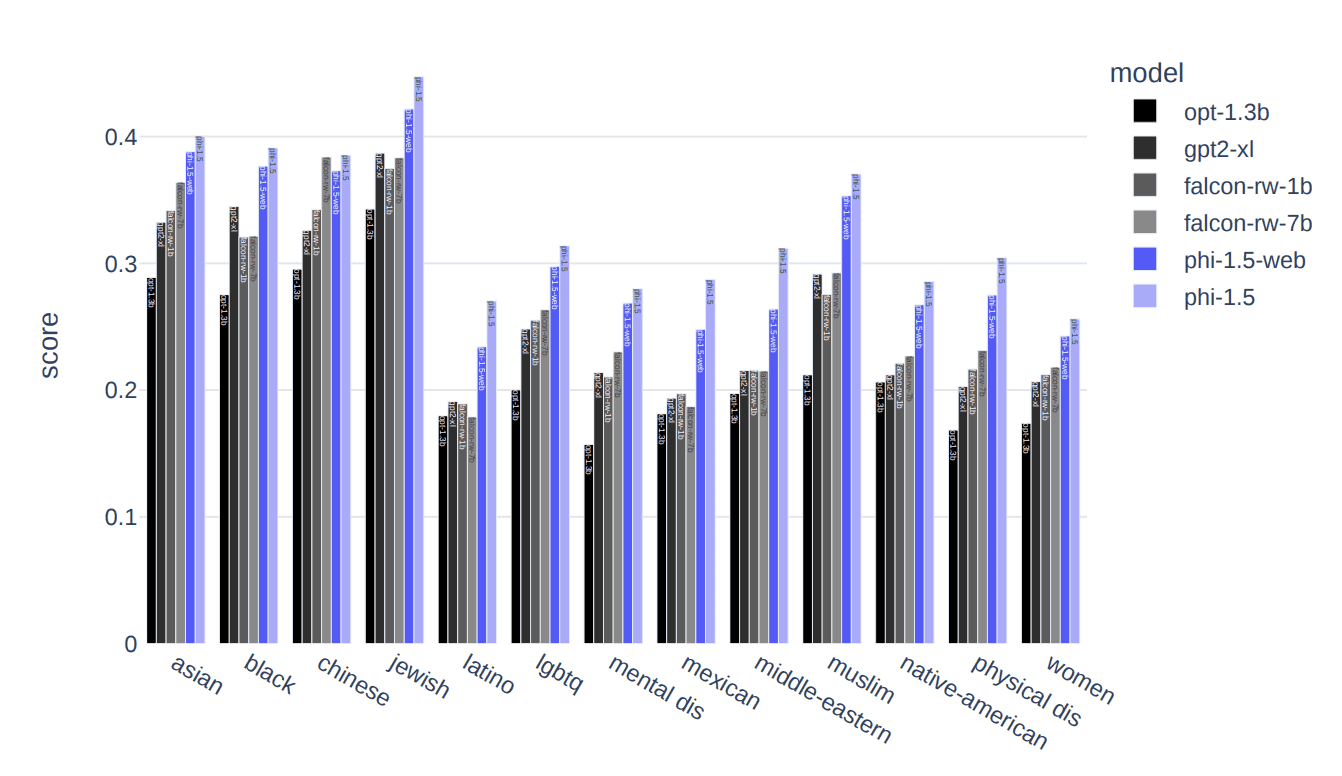

One of the major challenges with LLMs is toxic and biased content. Microsoft Research aimed to tackle this ongoing problem of harmful or offensive content and content that promotes a particular ideology.

Training on synthetic data produced a model with a lower propensity for generating toxic content than other LLMs such as Falcon-7B and Llama 2-7B, as shown in the image below:

Image via Textbooks Are All You Need II: phi-1.5 technical report

The image below shows that phi-1.5 performed slightly better than state-of-the-art models such as Llama 2-7B, Llama-7B, and Falcon-RW-1.3B on three benchmark categories: common sense reasoning, language skills, and multi-step reasoning.

Image via Textbooks Are All You Need II: phi-1.5 technical report

How was this done?

What set phi-1.5 apart was its use of textbook-like data, in contrast to the data scraped from the internet that LLMs are typically trained on. To further assess how the model deals with toxic content, the researchers used ToxiGen and also designed 86 prompts, each manually labeled ‘pass’, ‘fail’ or ‘did not understand’, to get a better understanding of the model’s limitations.

Of these prompts, phi-1.5 passed 47, failed 34 and did not understand 4. When the models were evaluated on the HumanEval benchmark, phi-1.5 also scored higher than other well-known models.
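HumanEval is a set of Python programming problems whose generated solutions are checked by unit tests, and results are commonly reported as pass@k: the probability that at least one of k sampled solutions passes. The sketch below uses the unbiased estimator from the original HumanEval paper, 1 - C(n-c, k) / C(n, k), where n samples are generated per problem and c of them pass. The article does not say which k phi-1.5 was scored with, and the per-problem counts here are made up purely for illustration.

```python
from math import comb

def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k estimate for a single problem.

    n: number of samples generated for the problem
    c: number of those samples that pass the unit tests
    k: number of samples the metric may "try"
    """
    if n - c < k:
        # Every possible set of k samples contains at least one passing one.
        return 1.0
    # 1 minus the probability that a random k-subset contains no passing sample.
    return 1.0 - comb(n - c, k) / comb(n, k)

# Hypothetical per-problem results: (samples generated, samples that passed).
results = [(10, 3), (10, 0), (10, 10), (10, 1)]

for k in (1, 5):
    # The benchmark score is the mean of the per-problem estimates.
    score = sum(pass_at_k(n, c, k) for n, c in results) / len(results)
    print(f"pass@{k} = {score:.3f}")
```

With these toy counts, pass@1 is simply the average fraction of passing samples (0.350), while pass@5 rises to about 0.604 because a problem only needs one passing sample out of five attempts.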

Here are the key points you should take away about phi-1.5:

- Is a transformer-based model

- Is an LLM trained with a next-word prediction objective

- Was trained on a dataset of 30 billion tokens

- Used 32xA100-40G GPUs

- Was trained in 8 days
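The “next-word prediction objective” listed above is, in standard LLM training, an average cross-entropy loss: at each position the model scores every vocabulary entry, and it is penalized by the negative log-probability it assigned to the token that actually came next. Below is a minimal pure-Python sketch of that loss, assuming a toy 4-word vocabulary and made-up model scores; real models such as phi-1.5 operate on subword tokens and large batched tensors, not hand-written lists.

```python
import math

def softmax(logits: list[float]) -> list[float]:
    """Turn raw scores into probabilities (subtracting the max for stability)."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def next_token_loss(logits_per_position: list[list[float]], targets: list[int]) -> float:
    """Average cross-entropy of predicting each next token.

    logits_per_position[t] scores every vocabulary entry for the token that
    follows position t; targets[t] is the index of the token that actually followed.
    """
    losses = []
    for logits, target in zip(logits_per_position, targets):
        probs = softmax(logits)
        losses.append(-math.log(probs[target]))
    return sum(losses) / len(losses)

# Toy example: a 4-word vocabulary and the sequence "the cat sat".
vocab = ["the", "cat", "sat", "mat"]
tokens = [0, 1, 2]  # the, cat, sat

# Hypothetical model scores after seeing "the" and after seeing "the cat".
logits = [
    [0.1, 2.0, 0.3, 0.5],  # scores for the word following "the"
    [0.2, 0.1, 1.5, 0.4],  # scores for the word following "the cat"
]
targets = tokens[1:]  # shift by one: each position predicts the next token
print(f"loss = {next_token_loss(logits, targets):.4f}")
```

Training lowers this loss by pushing probability mass toward the observed next token; a model that assigns equal scores to all four words would sit at ln(4), roughly 1.386.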

Nisha Arya is a Data Scientist, Freelance Technical Writer and Community Manager at KDnuggets. She is particularly interested in providing Data Science career advice or tutorials and theory-based knowledge around Data Science. She also wishes to explore the different ways Artificial Intelligence is or can be of benefit to the longevity of human life. A keen learner, she seeks to broaden her tech knowledge and writing skills, whilst helping guide others.