A research project led by USC computer science student Sumedh A. Sontakke wants to open the door for robots to be caregivers for aging populations. The team claims the RoboCLIP algorithm, developed with help from Professor Erdem Biyik and Professor Laurent Itti, allows robots to perform new tasks after just one demonstration.

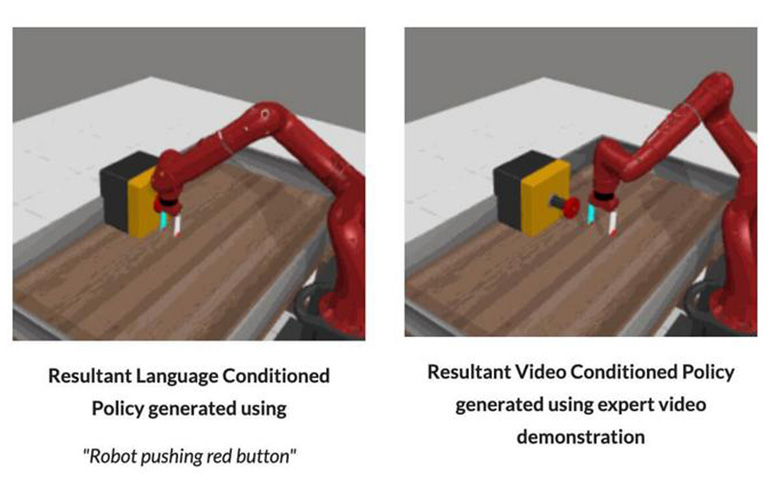

RoboCLIP only needs to see one video or textual demonstration of a task to perform that task two to three times better than other imitation learning (IL) models, the team claimed.

“To me, the most impressive thing about RoboCLIP is being able to make our robots do something based on only one video demonstration or one language description,” said Biyik, a roboticist who joined USC Viterbi’s Thomas Lord Department of Computer Science in August 2023 and leads the Learning and Interactive Robot Autonomy Lab (Lira Lab).

The project started two years ago when Sontakke realized how much data is required to have robots perform basic household tasks.

“I started thinking about household tasks like opening doors and cabinets,” Sontakke said. “I didn’t like how much data I needed to collect before I could get the robot to successfully do the task I cared about. I wanted to avoid that, and that’s where this project came from.”

How does RoboCLIP work?

Most IL models learn how to complete tasks through trial and error. The robot performs the task over and over, and gets a reward only when it finally completes it. While this can be effective, it requires massive amounts of time, data, and human supervision to get the robot to successfully perform a new task.

“The large amount of data currently required to get a robot to successfully do the task you want it to do isn’t feasible in the real world, where you want robots that can learn quickly with few demonstrations,” Sontakke said in a release.

RoboCLIP works differently than typical IL models, as it incorporates the latest advances in generative AI and video-language models (VLMs). These systems are pre-trained on large amounts of video and textual demonstrations, according to Biyik.

The researchers claimed RoboCLIP performs well out of the box on household tasks, like opening and closing drawers or cabinets.

“The key innovation here is using the VLM to critically ‘observe’ simulations of the virtual robot babbling around while trying to perform the task, until at some point it starts getting it right – at that point, the VLM will recognize that progress and reward the virtual robot to keep trying in this direction,” Itti said.

According to Itti, the VLM can tell the robot is getting closer to success when the textual description it creates while observing the robot comes closer to what the user wants.
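The reward idea Itti describes can be sketched in a few lines. This is only an illustration under stated assumptions, not the team's implementation: it assumes the VLM turns both the robot's attempt and the user's task description into embedding vectors, and that "closer" is measured with cosine similarity. The function names and the three-dimensional vectors are made up for the example, and no real VLM is called.

```python
import math
from typing import Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two embedding vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0  # Treat a zero vector as carrying no signal.
    return dot / (norm_a * norm_b)


def episode_reward(rollout_embedding: Sequence[float],
                   task_embedding: Sequence[float]) -> float:
    """Hypothetical reward: how closely the observed attempt matches the task.

    Assumption: both embeddings would come from a pre-trained VLM.
    """
    return cosine_similarity(rollout_embedding, task_embedding)


# Made-up embeddings standing in for VLM outputs.
task = [1.0, 0.0, 0.0]            # what the user wants
early_attempt = [0.0, 1.0, 0.0]   # robot "babbling", unrelated to the task
later_attempt = [0.8, 0.6, 0.0]   # robot starting to get it right

print("early reward:", episode_reward(early_attempt, task))
print("later reward:", episode_reward(later_attempt, task))
```

In this toy setup the later attempt scores higher than the early one, which is the signal that would encourage the virtual robot to keep trying in that direction.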

“This new kind of closed-loop interaction is very exciting to me and will likely have many more future applications in other domains,” Itti said.

Submit your nominations for innovation awards in the 2024 RBR50 awards.

What’s next?

Sontakke hopes that the system could someday help robots care for aging populations, or lead to other applications that could help anyone. The team says that future research will be necessary before the system is ready to take on the real world.

The paper, titled RoboCLIP: One Demonstration is Enough to Learn Robot Policies, was presented by Sontakke at the 37th Conference on Neural Information Processing Systems (NeurIPS), Dec. 10-16 in New Orleans.

Collaborating with Sontakke, Biyik and Itti on the RoboCLIP paper were two USC Viterbi graduates, Sebastien M.R. Arnold, now at Google Research, and Karl Pertsch, now at UC Berkeley and Stanford University. Jesse Zhang, a fourth-year Ph.D. candidate in computer science at USC Viterbi, also worked on the RoboCLIP project.